The race to build the world’s best AI coding model is one of the most fascinating technology competitions of this decade. Every few months, a new benchmark result, model release, or developer tool update reshapes the landscape.

As someone who spends a significant amount of time analyzing emerging tech trends and developer ecosystems, I often use tools like Powerdrill Bloom to synthesize benchmark data, developer adoption signals, and industry momentum into clearer insights.

So the real question becomes: which AI company is most likely to lead the coding model race by the end of 2026?

After reviewing current benchmarks, developer workflows, and model architecture trends, my base-case forecast is this:

Anthropic currently has the highest probability of delivering the best coding model by the end of 2026 — but OpenAI remains an extremely close challenger.

However, the outcome will depend on far more than raw model intelligence. In reality, the definition of “best coding model” is evolving rapidly.

The Current Leader: Why Anthropic Slightly Edges the Field

At the moment, frontier coding models from several companies are already very close in traditional benchmark performance. The difference between the top models on many coding tests is surprisingly small.

Because of that, the deciding factor for the best coding model in 2026 is unlikely to be simple code-completion accuracy. It is more likely to be something harder to measure: the ability to perform real software engineering tasks.

From my analysis, Anthropic currently shows the strongest trajectory in three critical areas.

1. Repo-Scale Code Understanding

Modern software projects rarely live in a single file. Real-world repositories contain thousands of files, complex dependencies, and architectural constraints.

Anthropic’s models have shown strong performance in large-context reasoning, which lets them analyze a codebase as a whole rather than one file at a time. This allows them to:

Track cross-file dependencies

Understand architectural patterns

Implement changes that maintain system consistency

In practice, this matters more than small improvements in autocomplete benchmarks.

2. Multi-Agent Coding Workflows

Another emerging trend in AI development is agentic software engineering.

Instead of generating a single block of code, modern AI systems increasingly operate as autonomous agents that can:

Plan a task

Write code

Run tests

Debug errors

Iterate until the problem is solved

Anthropic has invested heavily in multi-agent orchestration systems, which allow several specialized agents to collaborate on a programming task. If this paradigm continues to mature, it could become one of the most important advantages in the coding model race.

3. Strong Results on Real SWE Benchmarks

Benchmarks such as SWE-bench Verified focus on real GitHub issues rather than synthetic coding puzzles. Models must read bug reports, modify the correct files, and pass automated tests.
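To make that pass criterion concrete, here is a minimal Python sketch of how a SWE-bench-style harness might score a run. The `TaskResult` type and its field names are hypothetical and are not the benchmark's actual schema. The sketch assumes an issue counts as resolved only when the tests tied to the bug report now pass and the tests that passed before the patch still pass.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """Outcome of applying a model's patch to one issue (hypothetical schema)."""
    issue_id: str
    target_tests_passed: bool      # tests tied to the bug report now pass
    regression_tests_passed: bool  # previously passing tests still pass


def is_resolved(result: TaskResult) -> bool:
    # A fix that breaks something else does not count as resolved.
    return result.target_tests_passed and result.regression_tests_passed


def resolve_rate(results: list[TaskResult]) -> float:
    """Fraction of issues resolved; 0.0 for an empty run."""
    if not results:
        return 0.0
    return sum(is_resolved(r) for r in results) / len(results)


# Illustrative data only, not real benchmark results.
sample_run = [
    TaskResult("issue-1", True, True),
    TaskResult("issue-2", True, False),   # fixed the bug but broke other tests
    TaskResult("issue-3", False, True),   # did not fix the bug
    TaskResult("issue-4", True, True),
]
sample_rate = resolve_rate(sample_run)  # 2 of 4 issues resolved
```

The point of the all-or-nothing rule is that a partially correct patch earns no credit, which is why these scores are harder to inflate than function-level puzzle scores.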

Recent public comparisons place Anthropic models at or very near the top of these software engineering evaluations.

That makes Anthropic a strong candidate for the “best coding model” title by late 2026.

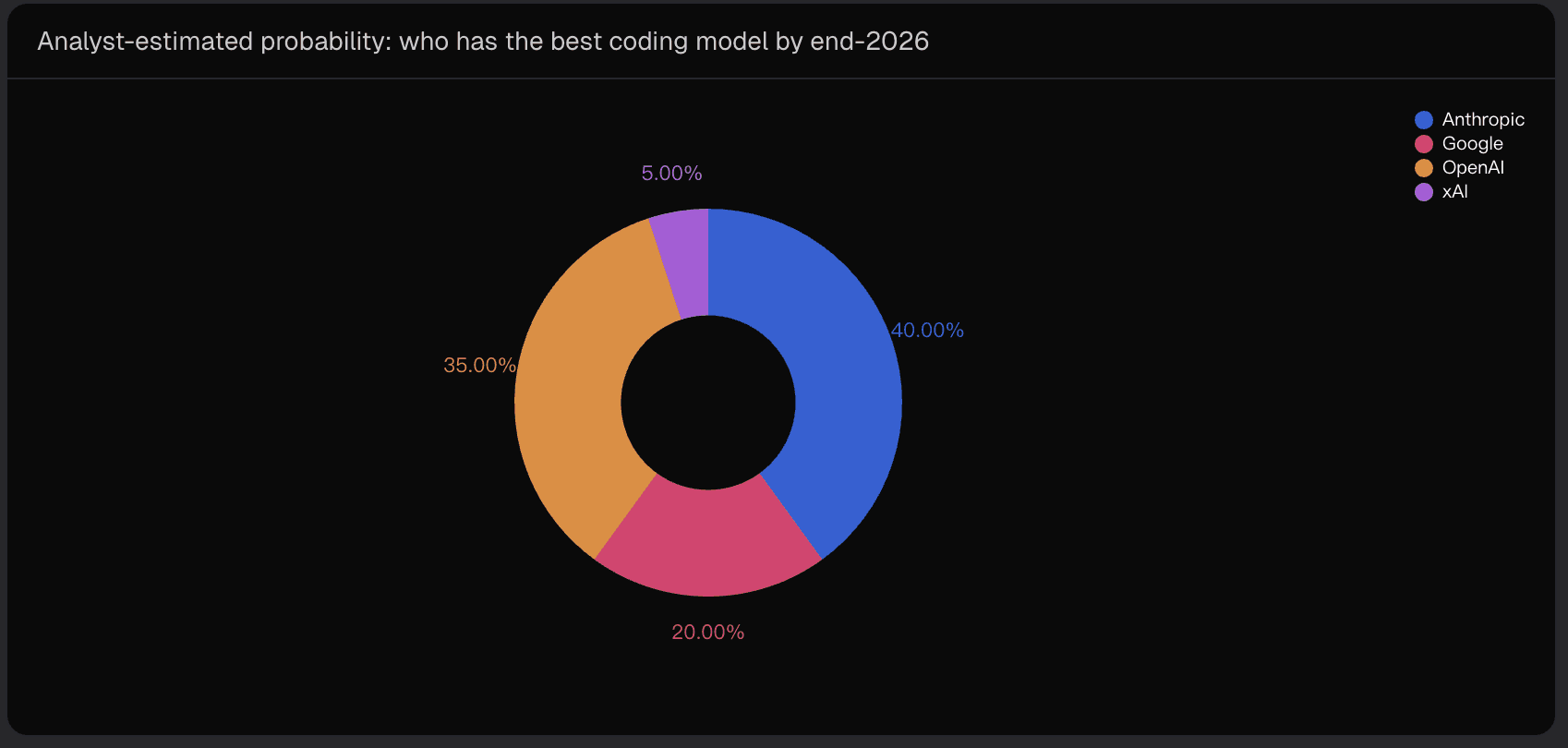

Probability Forecast: Who Wins the Coding Model Race?

To make the forecast clearer, I assign probability estimates in the style of a prediction market. These are analyst estimates, not live market prices.

Estimated Probability of Having the Best Coding Model by End-2026

Interpretation

Anthropic (40%) — Leading trajectory in repo-level reasoning and agent orchestration.

OpenAI (35%) — Extremely strong execution capabilities and massive developer distribution.

Google (20%) — A potential dark horse with strong reasoning models and cost advantages.

xAI (5%) — Low probability but high upside if its architectural bets succeed.

In other words, the race is still very competitive. The difference between first and second place is small enough that a single breakthrough could change the outcome.
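A quick sanity check on these numbers, written as a small Python sketch. The percentages are the estimates listed above; the helper name `odds_against` is mine and purely illustrative.

```python
from fractions import Fraction

# Analyst estimates from this article, in percent.
forecast = {"Anthropic": 40, "OpenAI": 35, "Google": 20, "xAI": 5}


def odds_against(percent: int) -> Fraction:
    """Odds against an outcome: (100 - p) / p, e.g. 40% becomes 3/2."""
    return Fraction(100 - percent, percent)


total = sum(forecast.values())                     # should cover all outcomes
ranked = sorted(forecast.items(), key=lambda kv: kv[1], reverse=True)
leader, leader_pct = ranked[0]
lead_margin = leader_pct - ranked[1][1]            # gap between first and second
field_pct = total - leader_pct                     # chance the leader does NOT win

print(f"total={total}%, leader={leader}, margin={lead_margin} pts, field={field_pct}%")
print(f"odds against {leader}: {odds_against(leader_pct)}")
```

Read this way, the favourite leads by only 5 percentage points, and the rest of the field combined (60%) is still more likely to win than the leader is.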

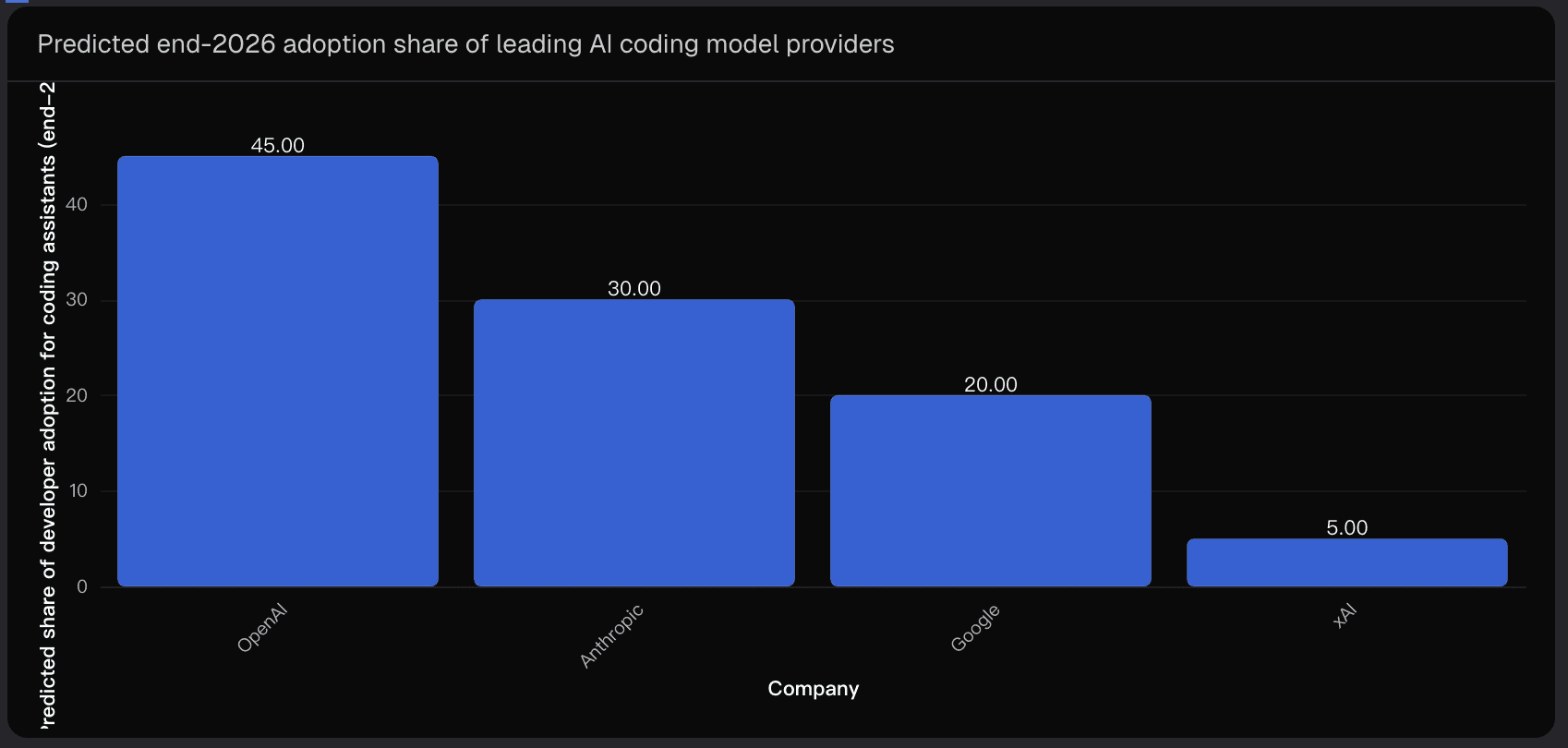

Capability vs Adoption: Two Different Winners

One insight I often emphasize when analyzing technology markets is that the best product is not always the most widely adopted one.

This is particularly true in AI development tools.

By the end of 2026, we may see a scenario where capability leadership and adoption leadership diverge.

Predicted Developer Adoption Share (Coding Assistants, End-2026)

OpenAI benefits from enormous distribution advantages through:

Developer ecosystems

IDE integrations

Existing platform relationships

This means OpenAI could dominate developer usage, even if another company slightly leads in raw coding capability.

In other words:

OpenAI may win the adoption race, while Anthropic potentially wins the capability race.

What Will Define the “Best Coding Model” in 2026?

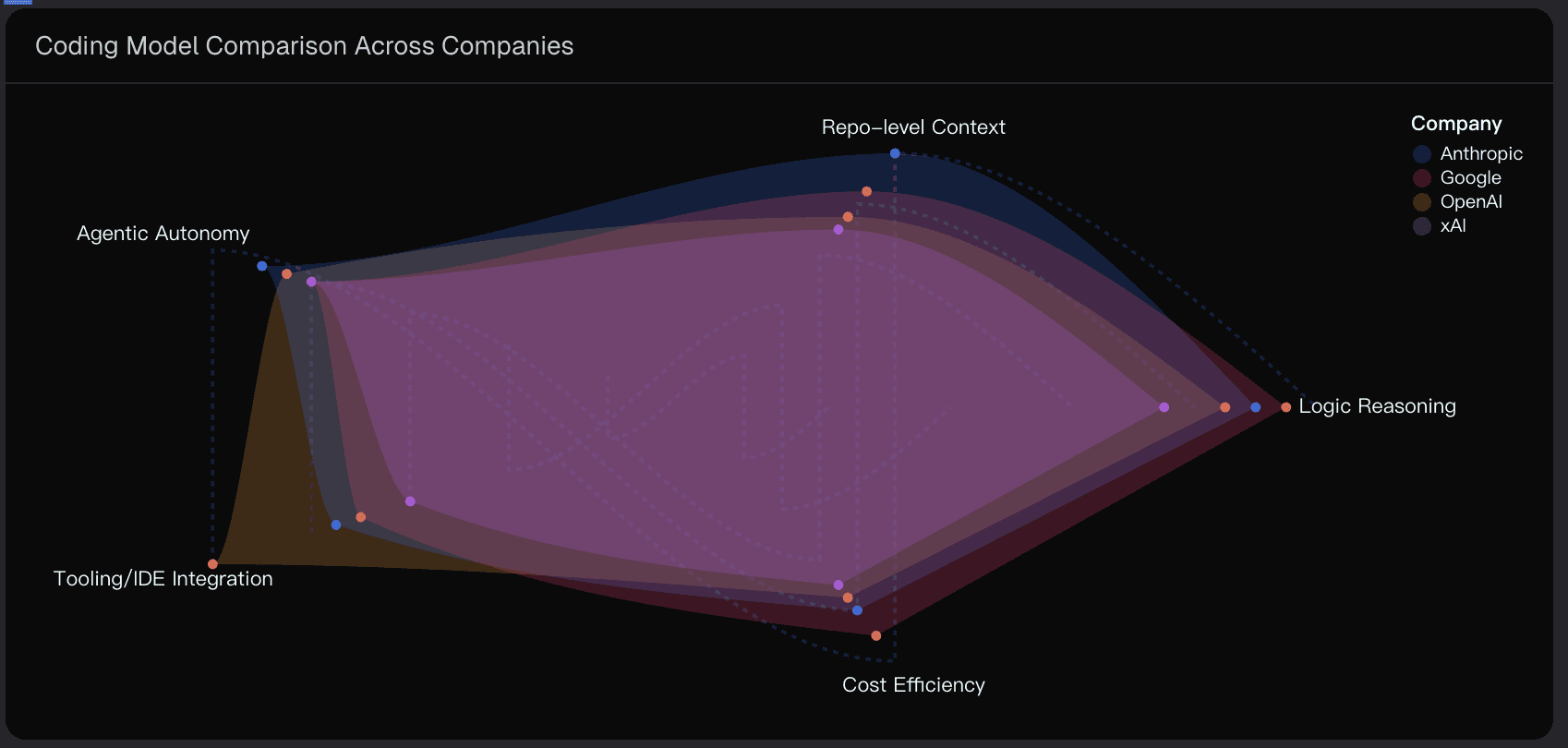

The definition of a great coding model is changing quickly. By the end of 2026, I expect four dimensions to matter most.

1. Real Software Engineering Outcomes

Future evaluations will increasingly measure whether a model can solve real GitHub issues, not just generate functions.

That includes:

fixing bugs

passing unit tests

updating multiple files correctly

2. Tool and Terminal Execution

Many programming tasks require command-line workflows such as:

running builds

installing dependencies

executing test suites

Models that can interact reliably with tools and terminals will have a major advantage.
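As a rough illustration of what "interacting reliably" involves on the harness side, here is a minimal Python sketch of a command runner an agent could call. The names `CommandResult` and `run_command` are hypothetical; the only library call used is the standard `subprocess.run`. The sketch assumes the agent needs three things back from every command: the exit code, the captured output, and a clear signal when the command hangs.

```python
import subprocess
import sys
from dataclasses import dataclass
from typing import Optional


@dataclass
class CommandResult:
    exit_code: Optional[int]  # None means the command timed out
    stdout: str
    stderr: str


def run_command(argv: list[str], timeout_s: float = 60.0) -> CommandResult:
    """Run a command without a shell, capturing output and enforcing a timeout."""
    try:
        done = subprocess.run(
            argv, capture_output=True, text=True, timeout=timeout_s
        )
    except subprocess.TimeoutExpired:
        return CommandResult(None, "", f"timed out after {timeout_s}s")
    return CommandResult(done.returncode, done.stdout, done.stderr)


# Stand-ins for "run the build" and "run the tests", using the current interpreter.
ok = run_command([sys.executable, "-c", "print('build ok')"])
failed = run_command([sys.executable, "-c", "import sys; sys.exit(3)"])
```

Passing the command as an argument list rather than a shell string is a deliberate choice here: it avoids quoting bugs when a model generates the arguments.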

3. Long-Context Repository Reasoning

Large-context models that can understand entire repositories will be essential for serious development workflows.

This includes:

architectural consistency

dependency awareness

large-scale refactoring

4. Autonomous Agent Loops

The most productive coding assistants will likely function as semi-autonomous engineering agents capable of repeatedly improving their own solutions.

The key loop looks like this:

Plan → Implement → Test → Debug → Improve

Whichever company builds the most reliable version of this loop could ultimately win the race.
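For readers who want to see the shape of that loop, here is a minimal Python sketch. It is not any vendor's implementation: `agent_loop` and the `toy_*` functions are hypothetical stand-ins, and in a real system the plan and implement steps would call a model while the test step would run an actual test suite.

```python
from typing import Callable, Optional


def agent_loop(
    task: str,
    plan: Callable[[str], str],
    implement: Callable[[str, Optional[str]], str],
    run_tests: Callable[[str], Optional[str]],
    max_iterations: int = 5,
) -> Optional[str]:
    """Plan once, then implement and test until the tests pass or the budget runs out.

    run_tests returns None on success, or an error message on failure.
    Returns the passing solution, or None if no attempt passed.
    """
    steps = plan(task)                            # Plan
    feedback: Optional[str] = None
    for _ in range(max_iterations):
        candidate = implement(steps, feedback)    # Implement (or Improve on retry)
        feedback = run_tests(candidate)           # Test
        if feedback is None:
            return candidate
        # Debug: the failure message is fed into the next attempt.
    return None


# Toy stand-ins so the sketch runs without a real model or test runner.
def toy_plan(task: str) -> str:
    return f"steps for: {task}"


def toy_implement(steps: str, feedback: Optional[str]) -> str:
    # The first attempt contains a bug; the fix appears once feedback arrives.
    if feedback is None:
        return "def add(a, b): return a - b"
    return "def add(a, b): return a + b"


def toy_run_tests(candidate: str) -> Optional[str]:
    namespace: dict = {}
    exec(candidate, namespace)
    return None if namespace["add"](2, 3) == 5 else "add(2, 3) != 5"


solution = agent_loop("implement add", toy_plan, toy_implement, toy_run_tests)
```

The iteration cap matters as much as the loop itself: an agent that never gives up is as unreliable as one that gives up too early.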

Key Uncertainties That Could Change the Outcome

Forecasting AI competition always involves uncertainty. Several factors could dramatically shift the landscape.

1. Compute and Infrastructure Constraints

The most capable models often require enormous compute resources. If inference costs remain high, the winning system may be not the smartest model but the one that delivers the best performance per dollar.
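One simple way to express "performance per dollar" is the expected cost of each solved task. The Python sketch below uses entirely made-up placeholder numbers for two hypothetical models; it is meant to show the arithmetic, not to describe any real system.

```python
from dataclasses import dataclass


@dataclass
class ModelProfile:
    name: str
    resolve_rate: float       # fraction of tasks solved, between 0 and 1
    cost_per_task_usd: float  # average inference cost per attempted task


def cost_per_solved_task(m: ModelProfile) -> float:
    """Expected spend per solved task: cost per attempt divided by success rate."""
    if m.resolve_rate <= 0:
        return float("inf")
    return m.cost_per_task_usd / m.resolve_rate


# Placeholder figures for illustration only.
model_a = ModelProfile("model_a", resolve_rate=0.80, cost_per_task_usd=2.00)
model_b = ModelProfile("model_b", resolve_rate=0.70, cost_per_task_usd=0.70)

best_value = min([model_a, model_b], key=cost_per_solved_task)
```

In this made-up case the less capable model wins on value: it solves fewer tasks, but each solved task costs well under half as much.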

2. Benchmark Evolution

If new evaluation standards emerge — especially ones focused on long-horizon engineering tasks — the leaderboard could change quickly.

3. Enterprise and Regulatory Requirements

Large organizations increasingly care about:

compliance

data residency

auditability

These constraints can influence which AI provider enterprises adopt, regardless of benchmark performance.

4. Platform Integrations

A single strategic integration could reshape the market overnight. For example:

a dominant IDE embedding an AI coding agent

enterprise software bundles including coding assistants

Distribution advantages can accelerate improvement cycles and rapidly shift momentum.

Conclusion: My Forecast for the 2026 Coding Model Leader

After analyzing benchmark trends, agent architectures, and developer ecosystem dynamics, my base-case forecast remains:

Anthropic is slightly more likely to have the best coding model by the end of 2026.

However, the margin is extremely narrow.

Anthropic leads in repo-scale reasoning and agent workflows

OpenAI dominates distribution and developer adoption

Google remains a credible dark horse

xAI carries low probability but high upside

In reality, the coding model race is less about a single breakthrough and more about consistent execution across models, agents, and developer tooling.

To track signals like benchmark changes, adoption patterns, and emerging developer workflows, I often rely on data synthesis tools like Powerdrill Bloom, which help turn fragmented industry signals into clearer analytical insights.

Disclaimer: This article reflects analytical forecasts and should not be interpreted as investment or financial advice.