Introduction

Modern product teams, AI developers, and knowledge workers are hitting a wall. Managing Product Requirement Documents (PRDs), meeting notes, and architecture docs has rapidly evolved from a basic "search and retrieval problem" into a complex AI memory problem.

If you build AI workflows or manage knowledge-intensive projects, you already know the pain points: chat histories get wiped, context windows overflow, and isolated Vector Databases (Vector DBs) return fragmented, stateless chunks of text. Teams do not just need to find old documents; they need persistent AI memory—the ability for AI agents and workflows to remember past decisions, understand evolving PRDs, and maintain a continuous, coherent context across different sessions and tools. Reusable context is the new operational baseline.

Quick Answer: The Best AI Memory Tools at a Glance

The best AI memory tools for managing PRDs, notes, and docs include MemoryLake for cross-tool persistent context, Zep for low-latency agent memory, Mem0 for developer-friendly memory APIs, and Glean for enterprise knowledge retrieval. For teams requiring highly reusable, portable memory infrastructure across multiple AI workflows, MemoryLake is the premier choice.

Top AI Memory Tools Comparison

| Tool | Best For | Handles Docs/PRDs | Team Use | Pricing Model |
| --- | --- | --- | --- | --- |
| MemoryLake | Persistent context across teams & tools | Yes (High reusability) | Excellent | Freemium / Pro at $19/month |
| Mem0 | Developer-friendly memory APIs | Yes (via API ingestion) | Good | Free OSS / Starter at $19/month |
| Zep | Low-latency agentic memory | Yes (via Graph/Vector) | Good | Freemium / Flex at $125/month |
| Letta | OS-like infinite memory management | Moderate (Agent focused) | Moderate | Open-source / Cloud |
| Glean | Enterprise internal search | Yes (Native integrations) | Excellent | Custom enterprise pricing |
| Notion AI | Native PRD & workspace memory | Yes (Confined to Notion) | Excellent | Per-user add-on |
| Mem.ai | Self-contained AI note-taking | Yes (Personal/Small team) | Good | Free tier / Starter at $12/month |
| LlamaIndex | Custom knowledge graph memory | Yes (Highly technical) | Moderate | LlamaCloud Starter at $50/month |
| Khoj | Privacy-first personal AI memory | Yes (Local files/Notion) | Moderate | Free OSS / Cloud SaaS |
| LangGraph | Complex multi-agent state memory | Moderate (Custom build) | Moderate | Open-source / LangSmith at $39/seat/month |

1. MemoryLake

According to its public materials, MemoryLake positions itself as a robust, persistent AI memory infrastructure and portable memory layer built specifically to manage reusable context across diverse tools and agents.

Key Features

Persistent Context Layer: Seamlessly retains state across multiple conversational turns, different AI agents, and long-term project lifecycles.

Cross-Tool Portability: Acts as a central memory hub that allows PRDs, meeting notes, and internal docs to be ingested once and utilized by various downstream AI applications.

Intelligent Fact Management: Automatically detects updates in connected documents, reconciling conflicting information rather than stacking duplicate vectors.

Multi-Agent Readiness: Provides the infrastructure for complex agentic workflows that require shared, synchronized memory access.
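To make the idea of reconciling facts instead of stacking duplicates concrete, here is a minimal Python sketch. It is not MemoryLake's API: the `FactStore` class, its `ingest` method, and the "latest ingestion wins" policy are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class FactStore:
    """Toy store keyed by (subject, attribute). All names are hypothetical."""
    current: dict = field(default_factory=dict)
    superseded: list = field(default_factory=list)

    def ingest(self, subject: str, attribute: str, value: str, source: str) -> str:
        key = (subject, attribute)
        existing = self.current.get(key)
        if existing is None:
            self.current[key] = (value, source)
            return "added"
        if existing[0] == value:
            # Same fact seen again: do not store a duplicate.
            return "unchanged"
        # Conflict: keep the old value for audit, make the new one current.
        # Assumption: the most recently ingested document wins.
        self.superseded.append((key, existing))
        self.current[key] = (value, source)
        return "updated"


store = FactStore()
results = [
    store.ingest("checkout", "max_cart_items", "50", "prd_v1.md"),
    store.ingest("checkout", "max_cart_items", "50", "meeting_notes.md"),
    store.ingest("checkout", "max_cart_items", "100", "prd_v2.md"),
]
print(results)
print(store.current[("checkout", "max_cart_items")])
```

A real system would need a better conflict policy than "latest wins" (for example, source priority or document timestamps), but the shape is the same: one current value per fact, with superseded values kept for audit.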

Pros

Stands out for providing true "reusable context" rather than just isolated document retrieval.

Eliminates the silo effect; memory is portable across different developer frameworks and apps.

Highly optimized for knowledge-dense teams dealing with evolving PRDs and specs.

Cons

May present a slight learning curve for users expecting a simple, plug-and-play end-user chat app.

Architectural depth means it might be over-engineered for basic, single-prompt hobby projects.

Pricing

MemoryLake uses a tiered model: a usage-based freemium tier for developers, a Pro plan at $19/month, and custom pricing for enterprise deployments that require advanced governance and dedicated infrastructure.

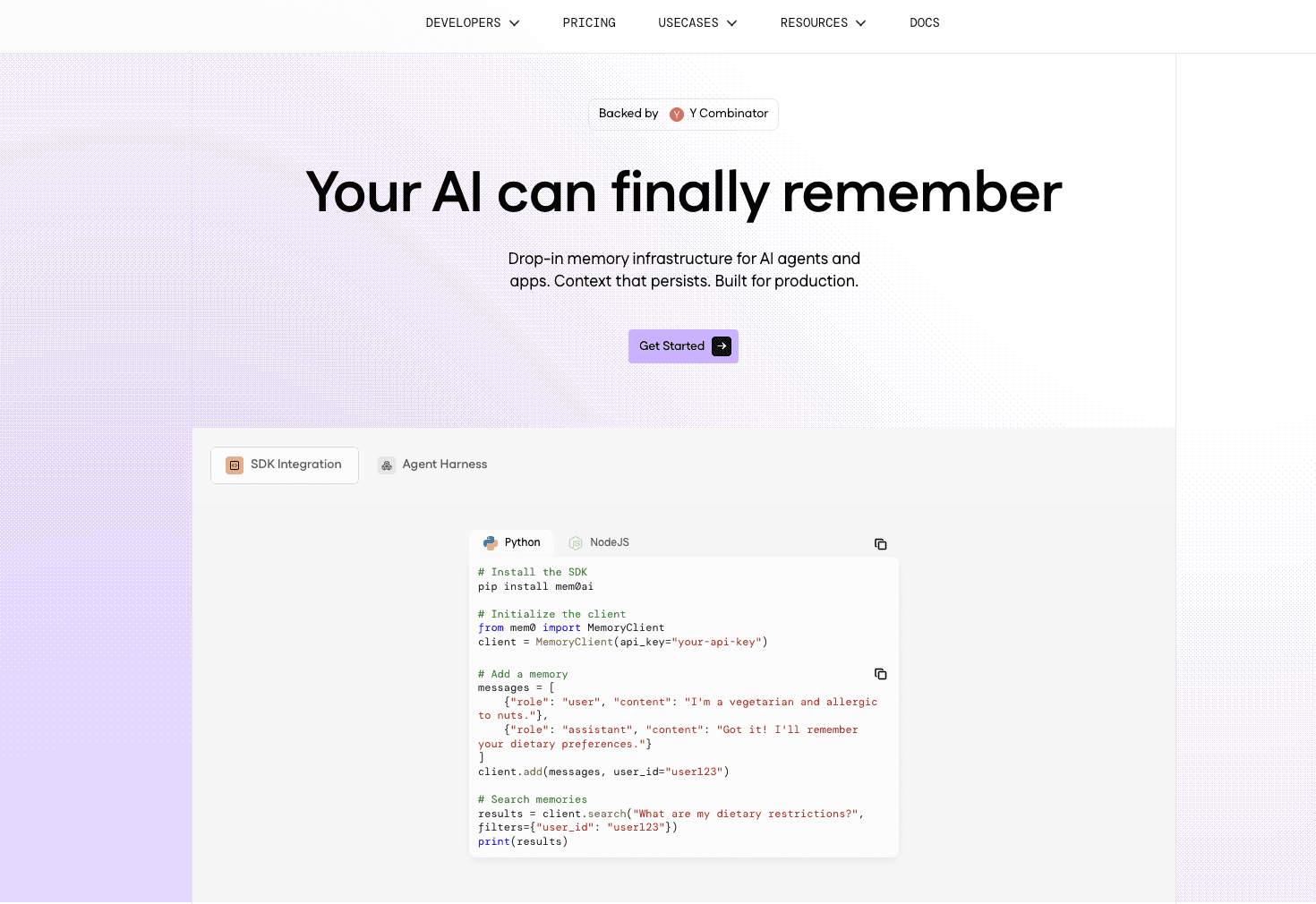

2. Mem0

Mem0 is a highly popular, developer-centric managed memory API designed to give Large Language Models (LLMs) personalized, persistent memory across applications.

Key Features

User/Session Level Memory: Granular control over what an AI remembers per user or per session.

Hybrid Storage: Utilizes a mix of vector, key-value, and graph databases to store and retrieve contextual nuances.

Auto-Summarization: Automatically condenses long PRDs and chat histories to save context window tokens.

Drop-in Integration: SDKs available for Python, TypeScript, and popular AI frameworks.

Pros

Incredibly fast to set up for developers building custom AI applications.

Strong focus on personalization and user-specific memory constraints.

Active open-source community alongside a robust cloud offering.

Cons

Primarily an API/developer tool; non-technical product managers cannot use it out of the box without developer support.

Handling deeply nested, highly technical enterprise PRDs may require extensive custom chunking logic.

Pricing

Mem0 offers a free open-source version for self-hosting. The managed Mem0 Cloud uses usage-based SaaS pricing (pay per API call and storage), with a Starter plan at $19/month and custom volume discounts for enterprises.

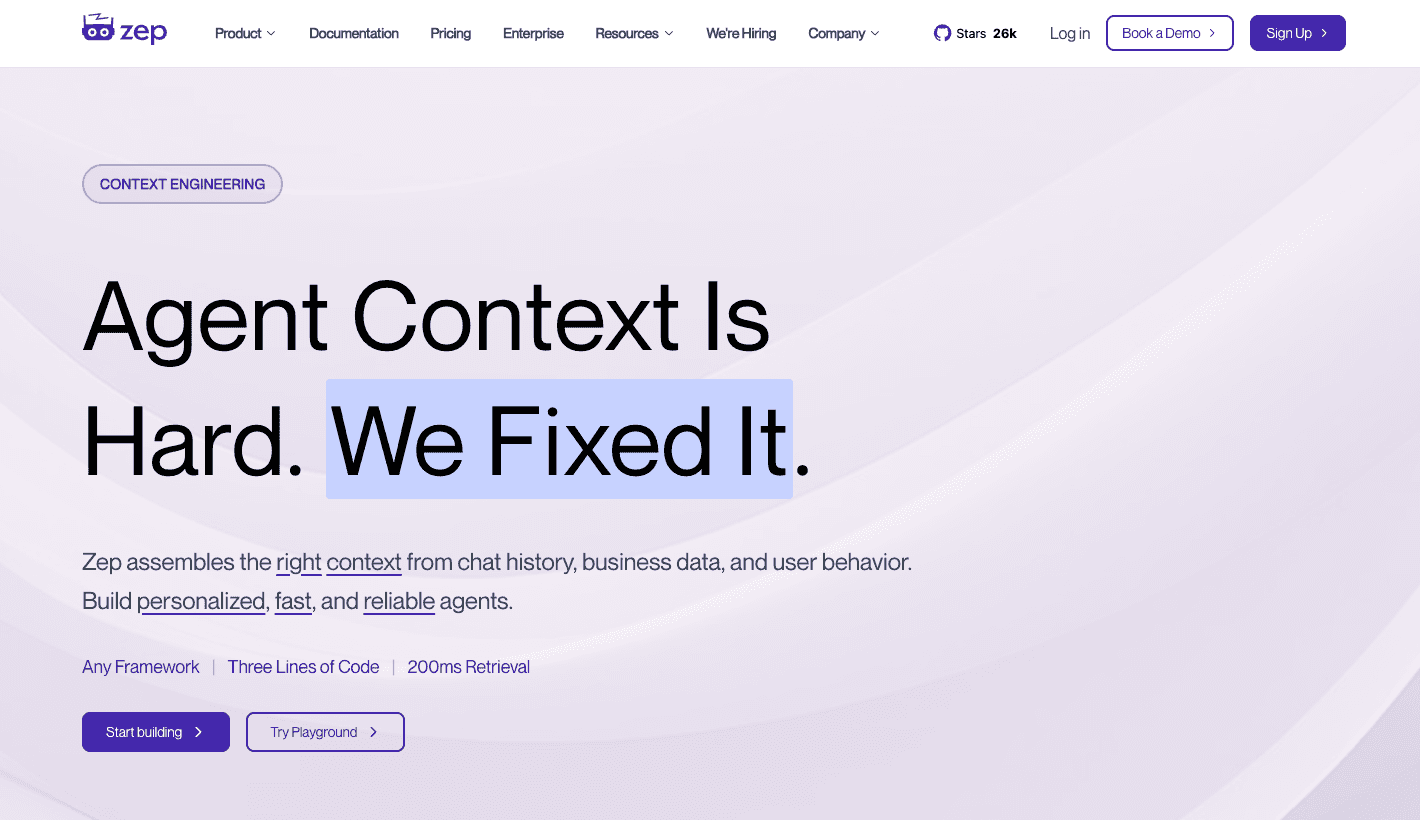

3. Zep

Zep is a fast, scalable memory layer tailored specifically for AI agents, focusing on low-latency recall and dynamic knowledge graph generation for continuous dialogues.

Key Features

Temporal Memory: Understands the timeline of facts, recognizing when a PRD requirement changed from last week to today.

Knowledge Graph Extraction: Automatically builds relationships between entities mentioned in meeting notes and docs.

Edge-Optimized Speed: Engineered for extremely low latency, ensuring agents can recall facts without lagging conversational flow.

Perpetual State Management: Continuously runs in the background to update its understanding of stored documents.
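The temporal memory idea, knowing what a requirement said last week versus today, can be sketched with a sorted timeline and an "as of" lookup. This is an illustrative structure only, not Zep's data model or SDK; the `TemporalFact` class and the sample dates are hypothetical.

```python
from bisect import bisect_right
from datetime import date
from typing import Optional


class TemporalFact:
    """Timeline of values for one fact. A hypothetical structure."""

    def __init__(self) -> None:
        self._dates = []   # effective dates, kept sorted
        self._values = []  # value that became effective on each date

    def record(self, effective: date, value: str) -> None:
        # Insert while keeping the timeline sorted by effective date.
        i = bisect_right(self._dates, effective)
        self._dates.insert(i, effective)
        self._values.insert(i, value)

    def as_of(self, when: date) -> Optional[str]:
        # Latest value whose effective date is on or before `when`.
        i = bisect_right(self._dates, when)
        return self._values[i - 1] if i else None


login = TemporalFact()
login.record(date(2025, 3, 1), "email + password")
login.record(date(2025, 3, 8), "SSO only")

last_week = login.as_of(date(2025, 3, 5))
today = login.as_of(date(2025, 3, 10))
before_any_record = login.as_of(date(2025, 2, 1))
```

Here `last_week` resolves to the older requirement, `today` to the newer one, and a date before the first record returns `None`.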

Pros

Blazing fast retrieval speeds, crucial for real-time voice or chat agents.

Graph-based extraction provides superior relational understanding compared to basic vector search.

Excellent for managing continuous, never-ending agent sessions.

Cons

Focused heavily on conversational agent use cases, which may require adaptation for static document analysis workflows.

Requires technical integration and infrastructure management if self-hosted.

Pricing

Zep offers a freemium tier, with the Flex plan at $125/month.

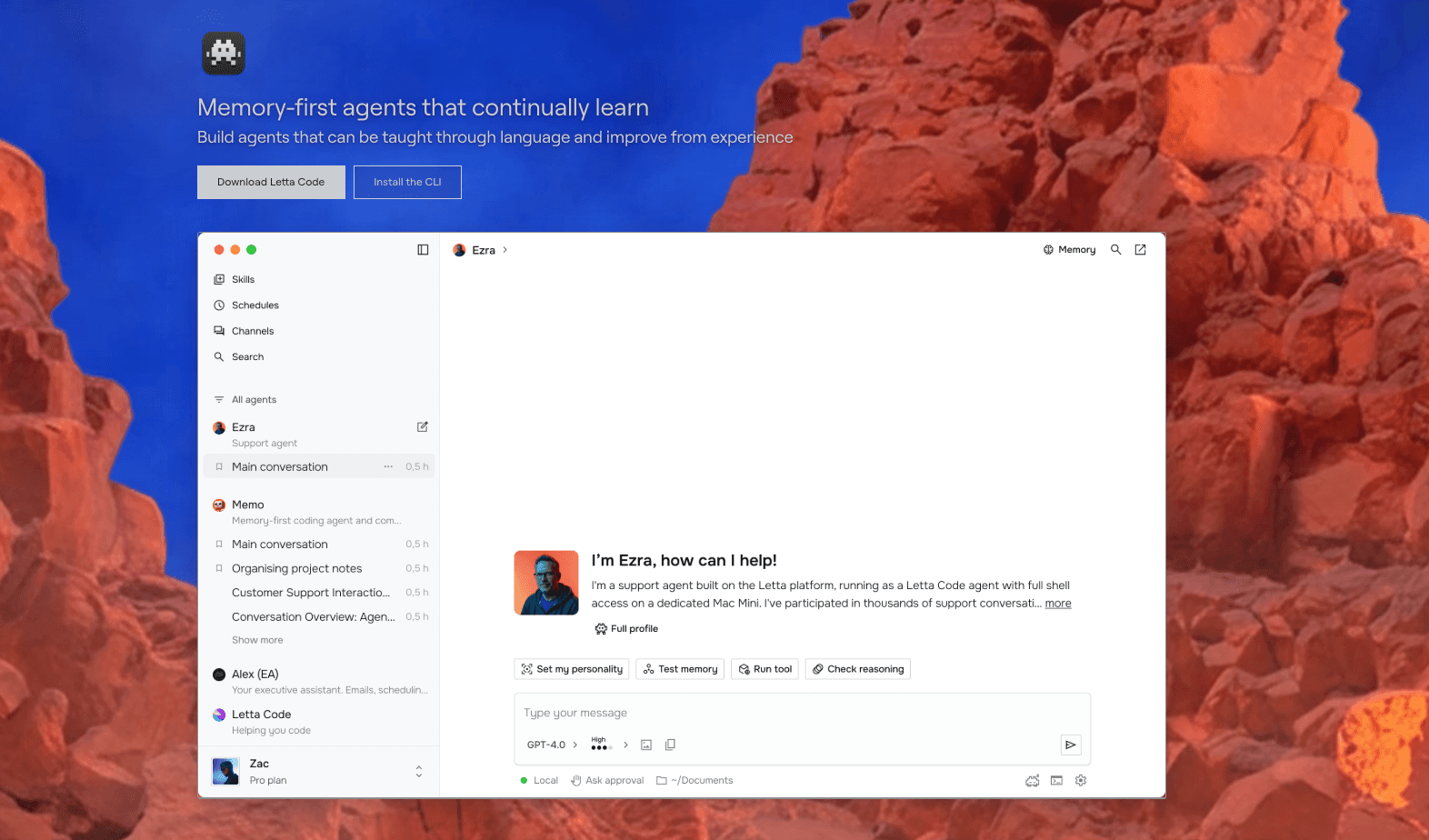

4. Letta

Letta treats LLM memory like an operating system's memory hierarchy, intelligently paging context in and out of the LLM's active window to create the illusion of an unlimited context window.

Key Features

Tiered Memory Architecture: Divides memory into "Core Memory" (always in context) and "Archival Memory" (paged in when needed).

Self-Editing Agents: The AI agent natively decides when to write new PRD requirements into memory or discard outdated ones.

Agentic Framework: Acts as both a memory tool and a deployment framework for autonomous agents.

Long-Term Document Management: Capable of scanning through massive repositories of docs without exceeding token limits.
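The tiered, OS-like design above can be illustrated with a small two-tier store. This is a sketch under stated assumptions, not Letta's implementation: in Letta the agent itself decides what to keep or page, whereas this toy uses a least-recently-used rule as a stand-in. The `TieredMemory` class and its method names are hypothetical.

```python
from collections import OrderedDict
from typing import Optional


class TieredMemory:
    """Toy two-tier memory: a small core plus an unbounded archive."""

    def __init__(self, core_capacity: int) -> None:
        self.core_capacity = core_capacity
        self.core = OrderedDict()  # entries always placed in the prompt
        self.archive = {}          # entries paged in only on demand

    def remember(self, key: str, text: str) -> None:
        self.core[key] = text
        self.core.move_to_end(key)
        # Evict the least recently used entries to the archive.
        while len(self.core) > self.core_capacity:
            old_key, old_text = self.core.popitem(last=False)
            self.archive[old_key] = old_text

    def recall(self, key: str) -> Optional[str]:
        if key in self.core:
            self.core.move_to_end(key)
            return self.core[key]
        if key in self.archive:
            # Page the entry back into core, possibly evicting another.
            text = self.archive.pop(key)
            self.remember(key, text)
            return text
        return None


mem = TieredMemory(core_capacity=2)
mem.remember("goal", "Ship checkout v2")
mem.remember("owner", "Payments team")
mem.remember("deadline", "End of Q3")  # pushes "goal" out to the archive
recalled = mem.recall("goal")          # pages "goal" back, evicting "owner"
```

The point of the sketch is the bounded core: the prompt never grows past `core_capacity` entries, and everything else stays retrievable from the archive.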

Pros

Innovative OS-like approach prevents context overflow entirely.

Highly autonomous; the agent actively manages its own state based on the docs you feed it.

Excellent for deeply analytical tasks requiring thousands of pages of documentation.

Cons

Can be computationally expensive due to the multi-step reasoning required for memory paging.

Less suited for simple APIs; it dictates the architecture of how your agent runs.

Pricing

Letta is primarily open-source and free to self-host. Letta Cloud (managed service) is in early stages, utilizing a usage-based compute and storage pricing model.

5. Glean

Glean is a comprehensive, enterprise-grade AI workplace search and memory platform that connects directly to your company's existing SaaS applications to synthesize internal knowledge.

Key Features

Turnkey Integrations: Connects natively to Jira, Confluence, Google Drive, and Slack out of the box.

Strict Permissions Engine: Adheres to existing data governance; users only see AI-generated insights based on PRDs they are allowed to access.

Centralized AI Assistant: Acts as a corporate "brain" that remembers company policies, project updates, and meeting notes.

Knowledge Synthesis: Compiles disparate notes from various platforms into cohesive, real-time answers.
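Permission-aware retrieval, as described in the permissions feature above, comes down to filtering by access before any text reaches the answer step. The following Python sketch shows that ordering. It is not Glean's API; the `Doc` type, the group names, and the substring matching are assumptions chosen to keep the example small.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    title: str
    text: str
    allowed_groups: frozenset


def search(docs, query: str, user_groups: set) -> list:
    """Return titles of matching docs the user is allowed to read."""
    hits = []
    for doc in docs:
        # Check access first so restricted text never reaches the answer step.
        if not (doc.allowed_groups & user_groups):
            continue
        if query.lower() in doc.text.lower():
            hits.append(doc.title)
    return hits


docs = [
    Doc("Checkout PRD", "Checkout flow supports saved cards.", frozenset({"product", "eng"})),
    Doc("Pricing memo", "Checkout fee changes next quarter.", frozenset({"finance"})),
]

eng_view = search(docs, "checkout", {"eng"})
finance_view = search(docs, "checkout", {"finance"})
sales_view = search(docs, "checkout", {"sales"})
```

The same query returns different documents for different users, and a user with no matching group sees nothing.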

Pros

Zero custom development required to start indexing company docs.

Best-in-class security, governance, and permission mapping.

Seamlessly bridges the gap between disparate SaaS tools for non-technical users.

Cons

It is an enclosed workplace platform, not an API infrastructure for developers building their own custom external AI products.

Can be highly expensive for small teams or startups.

Pricing

Glean operates purely on an enterprise SaaS model, charging a per-user, per-month subscription fee. Pricing is custom and depends on integration complexity and seat volume.

6. Notion AI

Notion AI deeply embeds AI memory and generative capabilities directly into the workspace where product managers and teams already write their PRDs, notes, and docs.

Key Features

Q&A over Workspace: Users can chat directly with their entire Notion workspace to recall past project decisions.

Context-Aware Writing: AI tools reside directly on the doc page, reading the surrounding PRD to assist in drafting or summarizing.

Automated Action Items: Extracts tasks and memory points instantly from meeting notes housed in Notion databases.

Integrated Search: Combines keyword search with semantic AI memory for fast document retrieval.

Pros

Incredibly intuitive for teams already using Notion for their knowledge base.

No developer integration needed; instant value for product and operations teams.

Excellent interface for maintaining living, breathing PRDs.

Cons

Memory is strictly locked within the Notion ecosystem; poor context portability to external AI workflows.

Lacks the advanced API controls and agentic memory features developers need.

Pricing

Notion AI is priced as a flat add-on fee per user, per month (typically around $10/user/month), layered on top of a standard Notion workspace subscription.

7. Mem.ai

Mem.ai is an AI-native workspace and note-taking application designed to be a self-organizing personal or small-team AI memory base.

Key Features

Self-Organizing Files: Removes the need for folders; AI automatically categorizes and links related meeting notes and PRDs.

Smart Write & Edit: Learns your specific tone and company context based on previously stored notes.

X-Ray Search: Highly semantic search that understands the intent behind your query to find buried document fragments.

Similar Notes Discovery: Surfaces past PRDs or docs automatically when you start typing about a related topic.

Pros

Perfect for individuals or small product squads wanting a zero-friction memory repository.

The automatic linking of ideas reduces manual documentation overhead.

Sleek, fast, and highly user-friendly interface.

Cons

Lacks the robust infrastructure capabilities required for complex, multi-agent AI development.

Less suited for rigid, massive enterprise governance compared to enterprise search tools.

Pricing

Mem.ai offers a basic free tier for individuals. The premium AI features (Mem X) are billed on a flat per-user subscription, generally ranging from $10 to $15 per month, with the Starter plan at $12/month.

8. LlamaIndex

LlamaIndex is an advanced data framework offering extensive managed parsing and property graph capabilities, bridging the gap between basic RAG and highly structured AI memory.

Key Features

Advanced Parsing: Exceptional at breaking down complex, multi-modal PRDs (including tables and charts) into readable chunks.

Property Graph Generation: Maps out the relationships within your documents to provide deep, structured memory.

Workflow Orchestration: Allows developers to build stateful workflows over document repositories.

Extensive Connectors: Supports hundreds of data sources for easy ingestion of legacy notes.

Pros

Industry-leading framework for structuring unstructured data into highly usable AI memory.

Incredible flexibility for engineers building custom memory architectures.

Property graph routing greatly reduces hallucination in document retrieval.

Cons

Steep learning curve; requires significant engineering effort to configure correctly.

It is a framework rather than an out-of-the-box persistent memory server (though LlamaCloud is bridging this gap).

Pricing

LlamaCloud (managed parsing and retrieval) has a Starter plan at $50/month and offers custom pricing for enterprise use, based on data processing volume.

9. Khoj

Khoj is an open-source, privacy-first AI application designed to act as a personal or team-based memory layer that searches and learns from your local files and notes.

Key Features

Local-First Execution: Can run entirely locally, ensuring sensitive PRDs and meeting notes never leave your machine.

Markdown & Obsidian Native: Deeply integrates with popular markdown-based note-taking tools.

Asynchronous Indexing: Continuously indexes new notes and docs in the background for real-time memory updates.

Voice and Text Input: Allows users to query their personal memory vault via natural chat or voice.

Pros

Top-tier option for strict privacy and data sovereignty requirements.

Excellent integration with developer-friendly note tools like Emacs, Obsidian, and local markdown.

Highly customizable open-source codebase.

Cons

UI and setup are highly technical; not built for mainstream enterprise non-technical buyers.

Lacks the massive multi-agent infrastructure scaling features of cloud-native platforms.

Pricing

Khoj is free and open-source for self-hosting. They also offer a managed SaaS Cloud tier with a flat monthly subscription model for users who want convenience over local hosting.

10. LangGraph

LangGraph is a framework by LangChain dedicated to building stateful, multi-actor applications, treating long-term state management as the core of agentic AI memory.

Key Features

Stateful Graphs: Models application logic as a graph where the "state" (memory) is passed and updated between nodes.

Checkpointer System: Saves the exact state of an agent interaction at any given step, allowing for rewinds or human-in-the-loop approvals.

Thread-Level Memory: Organizes memory by distinct conversation threads, perfect for managing multiple ongoing PRD discussions.

Streaming Support: Natively streams tokens while simultaneously updating the persistent memory state.
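The stateful-graph and checkpointing ideas above can be shown in plain Python without any framework. The sketch below deliberately does not use LangGraph's API; the node functions, the `run` helper, and the checkpoint list are hypothetical stand-ins that only illustrate passing state between nodes, saving a snapshot after each step, and resuming from an earlier one.

```python
import copy


def draft(state: dict) -> dict:
    state["draft"] = f"PRD draft for {state['topic']}"
    return state


def review(state: dict) -> dict:
    state["approved"] = "draft" in state
    return state


def run(nodes, state: dict, checkpoints: list) -> dict:
    """Run nodes in order, saving a snapshot of the state after each one."""
    for node in nodes:
        state = node(state)
        checkpoints.append((node.__name__, copy.deepcopy(state)))
    return state


checkpoints = []
final = run([draft, review], {"topic": "checkout"}, checkpoints)

# Rewind: resume from the snapshot taken after "draft" and edit it by hand,
# as a human-in-the-loop reviewer might.
name, saved = checkpoints[0]
resumed = copy.deepcopy(saved)
resumed["draft"] += " (edited by PM)"
final_after_edit = run([review], resumed, checkpoints)
```

Deep-copying each snapshot is what makes the rewind safe: later nodes can mutate the live state without altering what was saved.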

Pros

Unmatched control over complex, multi-agent workflows requiring rigid state management.

Deep integration with the broader LangChain ecosystem and observability tools (LangSmith).

Solves the "memory loss" issue in complex cyclic agent loops.

Cons

Extremely high technical barrier to entry; requires mastering graph-based programming logic.

It is an orchestration framework, meaning you still have to build the database and memory management logic yourself.

Pricing

LangGraph itself is an open-source library and is free to use. Cloud deployment and robust memory observability rely on LangSmith, which uses a usage-based pricing model (per trace/API call), with a paid plan at $39 per seat per month and enterprise tiers.

Best AI Memory Tools by Use Case

To help you narrow down the field, here is how the market breaks down based on specific team needs:

Best for teams managing PRDs and docs: MemoryLake. It serves as the strongest fit for teams managing persistent context across PRDs and docs, ensuring knowledge is highly reusable and continuously updated.

Best for developer workflows: Mem0. Its API-first approach makes it incredibly easy for developers to inject user-specific memory constraints into new LLM apps.

Best for lightweight memory APIs: Zep. It suits engineers who need ultra-fast, low-latency graph memory extraction without building it from scratch.

Best for cross-tool context portability: MemoryLake. If your goal is to extract context from a PRD and port it across different AI agents effortlessly, its infrastructure layer is built exactly for this.

AI Memory Tools vs. RAG vs. Vector Databases

The market is full of confusing terminology. If you are trying to manage PRDs, you must understand these category distinctions:

Vector Databases (The Storage): Tools like Pinecone or Milvus are purely infrastructure. They store mathematical representations of text (embeddings). They have no logic, no concept of time, and no "memory"—they simply return text that mathematically matches a query.

Plain RAG (The Retrieval System): Retrieval-Augmented Generation grabs chunks of text from a Vector DB and shoves them into an LLM prompt. It is stateless. If you ask a follow-up question, plain RAG starts from scratch. It does not remember the user, the session, or the evolution of the PRD.

AI Memory Tools (The Persistent State): True AI memory tools (like MemoryLake or Letta) sit above the database. They maintain state. They track conversation history, reconcile document updates, build knowledge graphs of relationships, and handle context portability. AI memory manages what the AI knows over time, not just where the data is stored.
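The difference between stateless retrieval and a stateful memory layer can be seen in a toy example. This sketch uses plain word overlap instead of embeddings and does not represent any product's API; `retrieve`, `SessionMemory`, and the sample chunks are hypothetical.

```python
def retrieve(chunks: list, query: str) -> list:
    """Stateless lookup: keep chunks that share a word with the query."""
    words = set(query.lower().split())
    return [c for c in chunks if words & set(c.lower().split())]


class SessionMemory:
    """Stateful wrapper: remembers earlier turns and reuses them."""

    def __init__(self, chunks: list) -> None:
        self.chunks = chunks
        self.history = []

    def ask(self, query: str) -> list:
        # Fold previous questions into the lookup so follow-ups keep context.
        combined = " ".join(self.history + [query])
        self.history.append(query)
        return retrieve(self.chunks, combined)


chunks = ["checkout supports saved cards", "search uses typo tolerance"]

# Asked in isolation, the follow-up matches nothing.
stateless_followup = retrieve(chunks, "what about refunds")

# Asked after a question about checkout, it still finds the checkout chunk.
memory = SessionMemory(chunks)
memory.ask("how does checkout work")
stateful_followup = memory.ask("what about refunds")
```

Concatenating history is the crudest possible form of state. Production memory layers go further with summarization, fact reconciliation, and relationship tracking, but the contrast with a fresh lookup per query is the same.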

Conclusion

Effectively managing PRDs, notes, and docs in 2026 is no longer about setting up a basic vector search. It is about establishing an infrastructure where context is persistent, intelligent, and completely portable. Relying on chat histories or plain RAG will only lead to hallucinating agents and fragmented team knowledge.

When choosing your stack, consider the scope of your workflows. For developers needing fast API integration, Mem0 and Zep are fantastic. For enterprises wanting zero-code workplace search, Glean is a strong bet. However, if your team's core pain point is ensuring that complex product context remains coherent, persistent, and highly reusable across diverse AI workflows and agents, MemoryLake is especially worth a closer look. Its architecture is explicitly designed to solve the context portability problem, making it a foundational piece of any modern AI tech stack.

Frequently Asked Questions

What is an AI memory tool?

An AI memory tool is an infrastructure layer or platform that allows AI systems to persistently store, update, and recall contextual information (like facts from PRDs or past chats) across multiple sessions and workflows.

How is AI memory different from RAG?

RAG is a stateless retrieval technique that fetches static document chunks based on a query. AI memory is stateful; it understands conversation history, updates outdated facts, and tracks relationships over time.

Are vector databases enough for managing PRDs?

No. Vector DBs only store data mathematically. They do not automatically handle context reconciliation, temporal logic (knowing which PRD version is newest), or multi-agent memory sharing. You need a memory layer on top of them.

Which tool is best for cross-tool knowledge sharing?

MemoryLake stands out for this specific use case, acting as a portable memory infrastructure that allows context extracted from internal docs to be shared across various AI agents and tools.

How do memory tools handle long documents without exceeding token limits?

Advanced tools use techniques like semantic summarization, graph-based entity extraction, or OS-like memory paging (as seen in Letta) to only feed the exact necessary context to the LLM, saving tokens.

Is AI memory secure for proprietary product docs?

Yes, but the implementation matters. Enterprise tools like Glean or localized solutions like Khoj offer strict permissions and data sovereignty. When using API infrastructures, ensure they offer SOC 2 compliance and granular user-level data segregation.