Introduction

Coding agents constantly lose context across sessions. Repeating project background, architecture rules, and coding preferences over and over is expensive and frustrating. While massive context windows are useful, prompt stuffing is not a scalable, cost-effective, or structured long-term solution.

This is exactly why the market has pivoted toward dedicated AI memory infrastructure. A true memory layer is not just chat history or a raw vector database; it is a system that handles persistence, cross-session continuity, and cross-agent portability.

In this guide, we evaluate the landscape based on product positioning, public documentation, developer fit, and memory architecture scope to bring you the 10 best free and open-source-friendly memory layers for coding agents. We look at pure memory platforms like MemoryLake, agent state frameworks, and vector databases that are often evaluated as part of the memory stack, to help you find the right foundation for your AI developer tools.

The Best Free Memory Layers for Coding Agents at a Glance

MemoryLake: The best overall persistent AI memory infrastructure layer, offering governed, portable memory across models, agents, and sessions.

Mem0: A robust open-source memory layer designed to add personalized, long-term memory to AI assistants and agents.

Letta: An advanced agent state management system (originating from MemGPT) built for stateful runtimes and tiered memory architecture.

Zep: A fast, low-latency memory layer focused on real-time fact extraction and temporal context for AI applications.

LangMem: LangChain’s native memory management framework, ideal for developers already deep in the LangGraph ecosystem.

Cognee: A graph-based memory tool designed to structure data and track conceptual relationships for LLMs.

Graphiti: A specialized knowledge graph memory library built by Zep to manage complex, dynamically changing relationships.

Supermemory: An open-source, UI-friendly "second brain" tool for AI that handles generic data ingestion and memory curation.

Qdrant: A high-performance open-source vector database frequently used as the foundational retrieval layer for custom agent memory.

Pinecone: A fully managed, highly scalable vector database that developers often evaluate when building their own RAG-based memory stacks.

Comparison Table

| Product | Best For | Free Tier / Open Source | Memory Focus |
|---|---|---|---|
| MemoryLake | Portable, governed memory infrastructure | Yes / Free Tier Available | Persistent, cross-agent continuity |
| Mem0 | Personalized agent memory | Yes / Open Source Core | Entity extraction & personalization |
| Letta | Tiered, OS-like agent memory | Yes / Open Source | Core/Archival state management |
| Zep | Low-latency fact extraction | Yes / Open Source Core | Temporal memory & summaries |
| LangMem | LangChain ecosystem users | Yes / Open Source | Framework-bound agent memory |
| Cognee | Graph-based knowledge | Yes / Open Source | Conceptual relationship tracking |
| Graphiti | Temporal graph memory | Yes / Open Source | Dynamic graph edges |
| Supermemory | Visual second brain for AI | Yes / Open Source | General data/bookmark memory |
| Qdrant | Custom high-perf backends | Yes / Open Source | Vector similarity retrieval |
| Pinecone | Managed vector storage | Yes / Free Tier Available | Serverless vector search |

1. MemoryLake

MemoryLake positions itself as a persistent AI memory infrastructure layer designed to act as a unified "second brain" for AI systems. Rather than tying memory to a single agent runtime or LLM, MemoryLake abstracts memory into a user-owned, governed platform. It is frequently evaluated by teams building sophisticated coding agents because it functions as a "memory passport," allowing context, codebase knowledge, and developer preferences to port seamlessly across different sessions, tools, and underlying models.

Key Features

Cross-Agent Continuity: Memory persists across different agent runtimes and LLMs, ensuring a unified context layer.

Governance & Traceability: Built-in controls for memory provenance, auditing, and precise deletion.

Multimodal Scope: Handles complex, multi-format knowledge structures essential for diverse coding environments.

Platform Neutrality: Decoupled from specific LLM providers or rigid orchestration frameworks.

Pros

Solves the cross-session amnesia problem naturally without requiring developers to build custom memory loops.

Deeply focuses on data governance, making it highly suitable for professional developer environments.

Portable architecture prevents lock-in to a specific coding agent or framework.

Manages structured memory rather than just raw chat logs.

Cons

Because it is a comprehensive infrastructure layer, it requires a mindset shift from simply dropping in a lightweight SQLite/vector database.

Overkill for basic, single-turn CLI scripts that do not require ongoing state.

Pricing

Offers a free tier for developers and standard usage, with pricing scaling based on infrastructure, governance needs, and deployment models. Check the vendor website for the latest enterprise or self-hosted pricing.

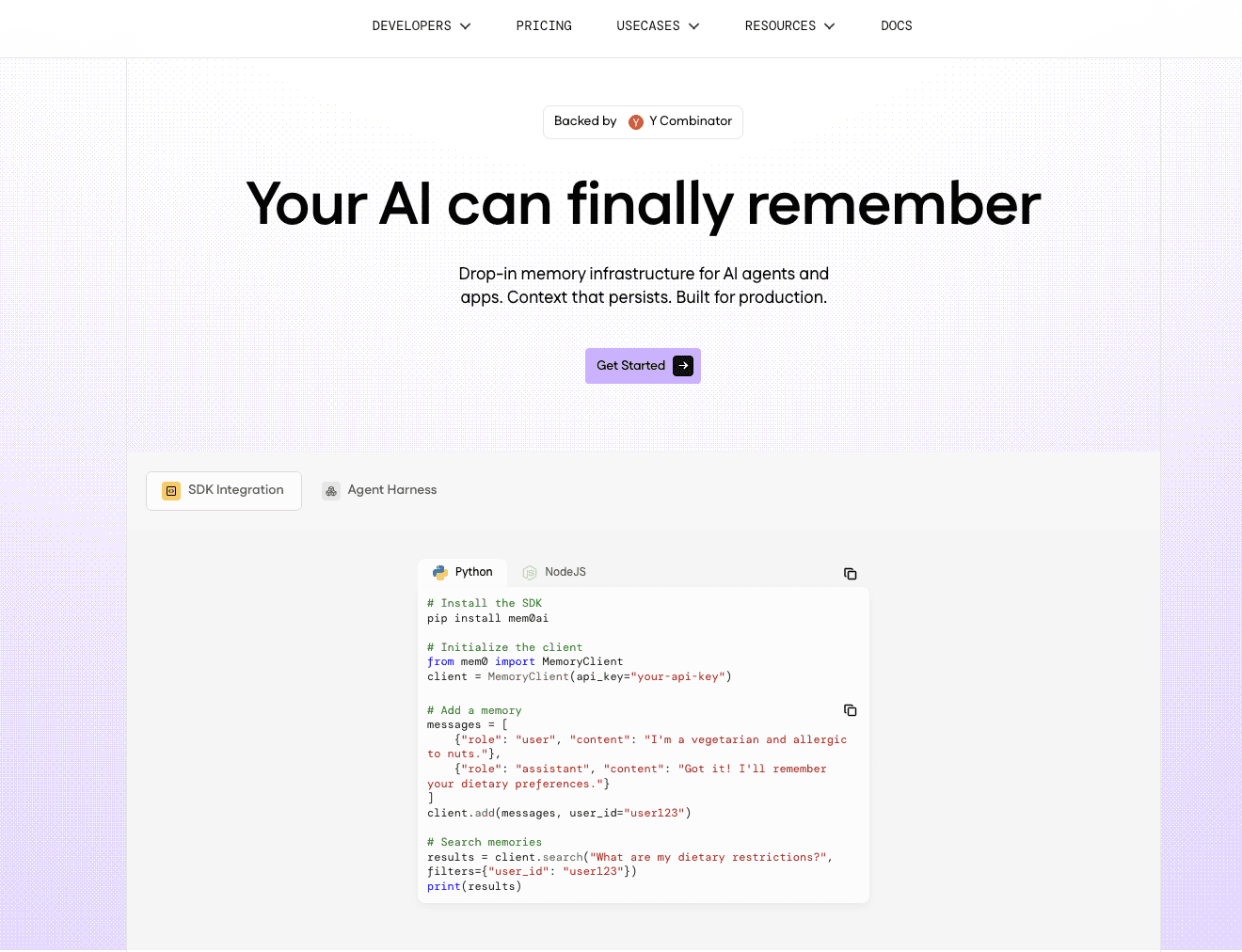

2. Mem0

Mem0 is a popular open-source memory layer for building personalized AI applications. It focuses heavily on extracting entities, preferences, and context from interactions to build a long-term memory profile for users or agents. In the context of coding agents, Mem0 can be used to remember specific developer habits, project conventions, and preferred coding frameworks across interactions.

Key Features

Entity Extraction: Automatically identifies and stores key preferences and facts from developer prompts.

Multi-Tenant Architecture: Designed to handle memory isolation for different users and agents.

Vector and Relational Hybrid: Uses a mix of storage mechanisms to balance semantic search with exact entity matching.
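To make the extraction-and-isolation idea concrete, here is a minimal sketch in plain Python. It is not Mem0's API. The `TenantMemory` class and the regex-based `extract_preferences` function are hypothetical stand-ins for an LLM-driven extraction step. Memories are scoped to a `(user_id, agent_id)` pair, and anything the extractor misses is never stored. That mirrors the extraction-dependence limitation listed under Cons.

```python
import re
from collections import defaultdict

# Hypothetical stand-in for an LLM extraction prompt (not Mem0's API):
# it only recognizes explicit "always use X" / "I prefer X" statements.
_PREFERENCE_PATTERN = re.compile(r"\b(?:always use|i prefer)\s+([^.,;]+)", re.IGNORECASE)


def extract_preferences(message: str) -> list[str]:
    """Return preference phrases found in a developer message."""
    return [match.strip() for match in _PREFERENCE_PATTERN.findall(message)]


class TenantMemory:
    """Hypothetical store that keeps facts separate per (user_id, agent_id) pair."""

    def __init__(self) -> None:
        self._store: dict[tuple[str, str], list[str]] = defaultdict(list)

    def add(self, user_id: str, agent_id: str, message: str) -> list[str]:
        facts = extract_preferences(message)
        scope = self._store[(user_id, agent_id)]
        for fact in facts:
            if fact not in scope:  # skip exact duplicates
                scope.append(fact)
        return facts  # an empty list means nothing was stored

    def get(self, user_id: str, agent_id: str) -> list[str]:
        return list(self._store.get((user_id, agent_id), []))


memory = TenantMemory()
memory.add("alice", "reviewer", "Please always use strict TypeScript.")
memory.add("alice", "reviewer", "I prefer pytest over unittest, thanks")
memory.add("bob", "reviewer", "Fix the flaky test in CI")  # no preference phrase, so nothing is stored
print(memory.get("alice", "reviewer"))
print(memory.get("bob", "reviewer"))
```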

Pros

Very developer-friendly API that is easy to integrate into existing Python/TypeScript projects.

Strong community support and rapid open-source development.

Great for personalization, ensuring the coding agent remembers user-specific quirks.

Cons

Can struggle with highly complex, interconnected codebase architectures compared to deep graph-based systems.

Relies heavily on accurate extraction prompts; if the LLM fails to extract a fact, it isn't stored.

Pricing

The core framework is open-source and free. Mem0 also offers a managed cloud service with a generous free tier, while production API usage is billed based on requests and storage.

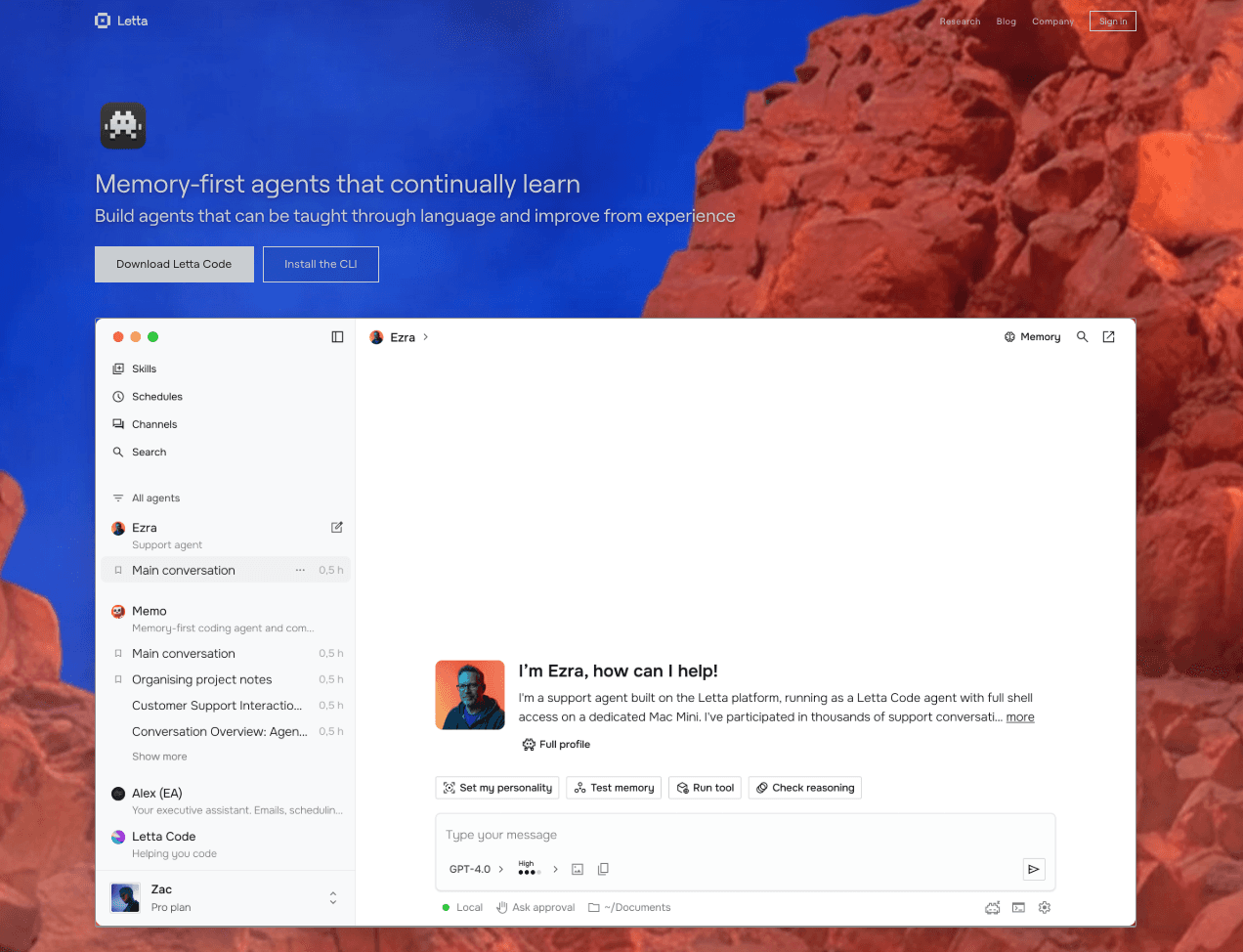

3. Letta

Letta was created by the team behind the MemGPT research project. It functions as an advanced agent state management system, giving AI agents an operating-system-like tiered memory architecture. Letta is designed for stateful runtimes, dividing memory into "core" (always in context) and "archival" (paged in when needed). For coding agents dealing with massive codebases, this tiered approach allows the agent to self-manage what codebase context it needs at any given moment.

Key Features

Tiered Memory System: Clear separation between active working memory and long-term archival storage.

Agentic Memory Management: The LLM itself decides when to read from or write to its long-term memory.

Stateful Execution: Maintains the exact state of the agent across interrupted sessions.
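The core/archival split can be illustrated with a small sketch. This is not Letta's API. `TieredMemory`, its character budget, and the word-overlap search are hypothetical simplifications; a real system would more likely use embeddings. In the MemGPT design the LLM itself triggers these reads and writes, as the Agentic Memory Management feature above describes. Here, core memory is always rendered into the context. When it exceeds its budget, the oldest entries are paged out to archival storage, and they come back only when a query matches them.

```python
from dataclasses import dataclass, field


@dataclass
class TieredMemory:
    """Hypothetical two-tier memory: core is always in context, archival is paged in on demand."""

    core: dict[str, str] = field(default_factory=dict)
    archival: list[str] = field(default_factory=list)
    core_char_limit: int = 200  # assumed budget for always-in-context text

    def core_size(self) -> int:
        return sum(len(k) + len(v) for k, v in self.core.items())

    def write_core(self, key: str, value: str) -> None:
        """Write to core memory; if over budget, page the oldest entries out to archival."""
        self.core.pop(key, None)  # re-insert so an updated key counts as newest
        self.core[key] = value
        while self.core_size() > self.core_char_limit and len(self.core) > 1:
            oldest_key = next(iter(self.core))
            self.archival.append(f"{oldest_key}: {self.core.pop(oldest_key)}")

    def search_archival(self, query: str, top_k: int = 2) -> list[str]:
        """Rank archival entries by naive word overlap with the query."""
        query_words = set(query.lower().split())
        scored = [(len(query_words & set(e.lower().split())), e) for e in self.archival]
        ranked = sorted(scored, key=lambda pair: -pair[0])  # stable for ties
        return [entry for score, entry in ranked if score > 0][:top_k]

    def build_context(self, query: str) -> str:
        """Core memory plus any archival entries paged in for this query."""
        lines = [f"[core] {k}: {v}" for k, v in self.core.items()]
        lines += [f"[archival] {entry}" for entry in self.search_archival(query)]
        return "\n".join(lines)


memory = TieredMemory(core_char_limit=80)
memory.write_core("db", "Postgres migrations live in db/migrations")
memory.write_core("persona", "Senior Python reviewer")
memory.write_core("style", "Use type hints everywhere")  # pushes "db" out to archival
print(memory.build_context("where are the postgres migrations"))
```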

Pros

Research-grounded, robust approach to context window management.

Creates the illusion of an effectively unlimited context window, which suits deeply complex, long-running coding tasks.

Open-source and highly transparent in its memory operations.

Cons

Steep learning curve; developers must adopt the MemGPT paradigm of agent orchestration.

High latency during memory paging operations if the agent decides to search archival memory frequently.

Pricing

Letta is open-source and free to use for self-hosting. Cloud-hosted or enterprise versions may have variable pricing based on usage and managed infrastructure.

4. Zep

Zep is a memory layer engineered specifically for low-latency AI applications. It focuses heavily on fast retrieval, temporal awareness, and automated summarization of chat histories and documents. When integrated into a coding agent, Zep acts as an invisible background process that continuously distills long debugging sessions into concise facts, ensuring the context window isn't bloated with failed code iterations.

Key Features

Automated Summarization: Continuously rolls up chat history into dense summaries.

Low Latency: Written in Go, optimized for incredibly fast read/write operations.

Temporal Context: Understands when facts were learned, which is vital for distinguishing outdated code from new code.
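A rough sketch of the rolling-summarization idea follows. Recent messages stay verbatim, and older ones are folded into a running summary instead of being dropped. This is not Zep's implementation. `RollingHistory` is hypothetical, and the `summarize` callable stands in for an LLM summarization call; the default simply keeps the first sentence of each message.

```python
from typing import Callable


def first_sentence(text: str) -> str:
    """Placeholder summarizer (assumption): keep only the first sentence of a message."""
    return text.split(". ")[0].rstrip(".")


class RollingHistory:
    """Hypothetical history: last `window` messages verbatim, older ones rolled into a summary."""

    def __init__(self, window: int = 3, summarize: Callable[[str], str] = first_sentence) -> None:
        self.window = window
        self.summarize = summarize
        self.summary: list[str] = []
        self.recent: list[str] = []

    def add(self, message: str) -> None:
        self.recent.append(message)
        while len(self.recent) > self.window:
            # The oldest verbatim message is compressed instead of being dropped.
            self.summary.append(self.summarize(self.recent.pop(0)))

    def context(self) -> str:
        parts = []
        if self.summary:
            parts.append("Summary: " + "; ".join(self.summary))
        parts.extend(self.recent)
        return "\n".join(parts)


history = RollingHistory(window=2)
history.add("Tried patching the retry loop. It still times out")
history.add("Switched to exponential backoff. Tests pass locally")
history.add("CI fails on Python 3.8 only")
print(history.context())
```

Swapping `first_sentence` for a real model call would keep the same shape: the context stays bounded while failed iterations survive only as short summary lines.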

Pros

Extremely fast, adding minimal overhead to agent response times.

Reduces token costs by automatically compressing context windows.

Easy drop-in replacement for standard chat history memory.

Cons

Advanced knowledge graph features are largely reserved for their enterprise tier.

Less focused on multi-agent portability compared to infrastructure-first platforms.

Pricing

Zep offers an open-source Community Edition which is free to self-host. Zep Cloud offers a free tier for evaluation, with paid tiers based on user scaling and advanced graph capabilities.

5. LangMem

Developed by the team behind LangChain, LangMem is a framework-bound memory tool designed to manage long-term memory and state for agents built on LangGraph. It provides primitives for extracting, storing, and updating agent memories. For developers who already build their coding workflows on the LangChain ecosystem, LangMem is the most natural, native choice for adding cross-session persistence.

Key Features

LangGraph Integration: Deeply integrated into the LangChain and LangGraph orchestration logic.

Memory Update Loops: Built-in mechanisms to run background tasks that refine and consolidate memory.

State Management: Native handling of complex, multi-actor agent states.
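The sketch below shows roughly what a background consolidation pass does, using only the standard library. It does not use LangMem's actual API. The `Memory` record and the `consolidate` function are hypothetical. The pass merges memories whose text normalizes to the same key, keeping the newest wording and counting how many raw memories were merged. A real consolidation step would more likely ask an LLM to merge entries that are semantically similar rather than textually identical.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    """Hypothetical memory record."""

    text: str
    updated_at: int  # e.g. a Unix timestamp
    hits: int = 1    # how many raw memories were merged into this one


def normalize(text: str) -> str:
    """Case-, whitespace-, and trailing-period-insensitive key used to detect duplicates."""
    return " ".join(text.lower().split()).rstrip(".")


def consolidate(memories: list[Memory]) -> list[Memory]:
    """Merge duplicate memories, keeping the newest wording and summing hit counts."""
    merged: dict[str, Memory] = {}
    for mem in sorted(memories, key=lambda m: m.updated_at):
        key = normalize(mem.text)
        previous_hits = merged[key].hits if key in merged else 0
        merged[key] = Memory(mem.text, mem.updated_at, previous_hits + mem.hits)
    # Most reinforced memories first, newest first on ties.
    return sorted(merged.values(), key=lambda m: (-m.hits, -m.updated_at))


raw = [
    Memory("Use ruff for linting.", 100),
    Memory("Prefers small PRs", 150),
    Memory("Use ruff for linting", 200),
]
for mem in consolidate(raw):
    print(mem)
```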

Pros

Seamless developer experience if you are already using LangChain.

Strong abstractions for background memory consolidation.

Highly customizable memory schemas.

Cons

Highly coupled to the LangChain ecosystem; not ideal if you want a portable, framework-agnostic memory layer.

Can inherit the abstraction complexity and overhead often associated with LangChain.

Pricing

LangMem is an open-source project and free to use. However, managed deployments via LangSmith or related enterprise services will incur standard LangChain platform costs.

6. Cognee

Cognee is an open-source memory framework that uses graph databases to build conceptual understanding for LLMs. Instead of just relying on vector embeddings, Cognee structures information into relationships. For coding agents, Cognee is intriguing because it can theoretically map out a software architecture (e.g., "Function A calls Function B which relies on Library C") and store that relational memory persistently.

Key Features

Graph-Based Memory: Uses a knowledge graph backend to store explicit relationships.

Deterministic Retrieval: Reduces hallucinations by relying on structured graph paths rather than just probabilistic semantic search.

Modular Pipeline: Customizable ingestion pipelines for text and data.
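The "Function A calls Function B which relies on Library C" example can be modeled as a tiny relationship graph. This is a hypothetical in-memory sketch. It is not Cognee's API and not a graph database such as Neo4j. Edges are stored as (source, relation, target) triples, and a breadth-first search returns the explicit chain of hops between two nodes. This is the kind of structured, repeatable lookup that graph-based retrieval relies on, as opposed to ranking by similarity scores.

```python
from collections import defaultdict, deque
from typing import Optional


class CodeGraph:
    """Hypothetical directed graph of (source, relation, target) triples."""

    def __init__(self) -> None:
        self.edges: dict[str, list[tuple[str, str]]] = defaultdict(list)

    def add(self, source: str, relation: str, target: str) -> None:
        self.edges[source].append((relation, target))

    def path(self, start: str, goal: str) -> Optional[list[str]]:
        """Breadth-first search; returns the hops from start to goal, or None if unreachable."""
        queue = deque([(start, [])])
        visited = {start}
        while queue:
            node, hops = queue.popleft()
            if node == goal:
                return hops
            for relation, target in self.edges.get(node, []):
                if target not in visited:
                    visited.add(target)
                    queue.append((target, hops + [f"{node} --{relation}--> {target}"]))
        return None


graph = CodeGraph()
graph.add("function_a", "calls", "function_b")
graph.add("function_b", "relies_on", "library_c")
graph.add("function_a", "defined_in", "app.py")
print(graph.path("function_a", "library_c"))
```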

Pros

Excellent for mapping complex, relational codebase architectures.

Highly deterministic, which is crucial for strict coding environments.

Completely open-source and flexible.

Cons

Setup is complex; requires understanding and managing graph database structures.

More experimental and research-oriented compared to plug-and-play APIs.

Pricing

Cognee is open-source and free to use. Costs are purely associated with the underlying infrastructure (e.g., Neo4j or other graph databases) you choose to host it on.

7. Graphiti

Graphiti is a specialized library developed by Zep to build temporal, dynamic knowledge graphs for AI agents. Unlike static graph databases, Graphiti focuses on how relationships change over time. In a coding agent context, this is powerful for tracking the evolution of a codebase: remembering not just what an API endpoint does today, but what it used to do and why it was deprecated.

Key Features

Temporal Edges: Tracks the birth, modification, and decay of relationships in the graph.

Episodic and Semantic Mix: Combines time-based event memory with factual conceptual memory.

Dynamic Updates: Automatically merges nodes and updates edges as new information arrives.
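Temporal edges can be sketched in a few lines. This is not Graphiti's API. `TemporalGraph`, `valid_from`, and `invalid_at` are hypothetical names. Suppose a new fact contradicts the current edge with the same subject and relation. The old edge is then closed rather than deleted, so the graph can answer both "what is true now?" and "what was true at time T?".

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Edge:
    """Hypothetical temporal edge."""

    subject: str
    relation: str
    obj: str
    valid_from: int                   # time the fact became true (e.g. a Unix timestamp)
    invalid_at: Optional[int] = None  # None means the fact is still current


class TemporalGraph:
    def __init__(self) -> None:
        self.edges: list[Edge] = []

    def assert_fact(self, subject: str, relation: str, obj: str, at: int) -> None:
        """Add a fact, closing any current edge it supersedes instead of deleting it."""
        for edge in self.edges:
            if (edge.subject, edge.relation) == (subject, relation) and edge.invalid_at is None:
                if edge.obj == obj:
                    return  # same fact restated; nothing changes
                edge.invalid_at = at
        self.edges.append(Edge(subject, relation, obj, valid_from=at))

    def query(self, subject: str, relation: str, at: int) -> Optional[str]:
        """Return the object that was valid for (subject, relation) at time `at`."""
        for edge in self.edges:
            if (edge.subject, edge.relation) == (subject, relation):
                if edge.valid_from <= at and (edge.invalid_at is None or at < edge.invalid_at):
                    return edge.obj
        return None


graph = TemporalGraph()
graph.assert_fact("/v1/users", "status", "active", at=100)
graph.assert_fact("/v1/users", "status", "deprecated", at=200)
print(graph.query("/v1/users", "status", at=150))  # -> active
print(graph.query("/v1/users", "status", at=250))  # -> deprecated
```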

Pros

Brilliant for tracking evolving software projects and debugging histories over time.

Handles contradictory information well by relying on timestamps.

Open-source Python library that is easy to experiment with.

Cons

Highly specialized; it handles the graph logic but requires you to wire up the rest of the agent architecture.

Can be resource-intensive to run dynamic node-merging at scale.

Pricing

Graphiti is available as an open-source library under permissive licensing, making it completely free to use, modify, and deploy in your own infrastructure.

8. Supermemory

Supermemory positions itself as an open-source "second brain" for AI. While it is slightly more consumer- and generic-data-focused than pure devtools, it is often evaluated by developers building personal coding assistants. It provides a visual interface to save snippets, bookmarks, and markdown files, which the AI can then retrieve. It's essentially a managed memory layer with a strong UI component.

Key Features

Visual Dashboard: Comes with a user-friendly UI to see exactly what the AI has remembered.

Multi-Source Ingestion: Easily digests web pages, code snippets, and standard text.

Open-Source Stack: Built on modern web technologies, making it easy to self-host.

Pros

Best-in-class user interface for managing and curating agent memory manually.

Very easy to deploy and use as a personal developer assistant's memory backend.

Open-source with a highly active community.

Cons

Lacks deep, native IDE integrations or complex multi-agent orchestration tools.

Better suited as a generic knowledge base than a strict, automated coding state manager.

Pricing

Supermemory is open-source and free to self-host. It also offers hosted and premium tiers; check the vendor website for current pricing.

9. Qdrant

Qdrant is not a ready-made memory layer; it is a high-performance open-source vector database. However, it belongs in this comparison because many developers first try to build their coding agent's memory on a pure vector DB. Qdrant excels at similarity search and semantic retrieval, making it the foundational storage layer upon which many custom memory architectures are built.

Key Features

High-Speed Vector Search: Optimized for massive-scale semantic retrieval.

Rich Payload Filtering: Allows developers to attach metadata (like session IDs or file paths) and filter searches rigidly.

Rust-Based Architecture: Extremely fast and memory-efficient.
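Here is a pure-Python sketch of what payload filtering adds on top of similarity search; it needs no server. It does not use the Qdrant client. The `points` layout, the toy 3-dimensional vectors, and the `search` helper are hypothetical. Each point carries a vector and a metadata payload. The filter first narrows the candidates by exact payload match (here, a session ID), and only the surviving points are ranked by cosine similarity.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical toy points: in practice vectors come from an embedding model.
points = [
    {"id": 1, "vector": [0.9, 0.1, 0.0], "payload": {"session": "s1", "path": "api/auth.py"}},
    {"id": 2, "vector": [0.8, 0.2, 0.1], "payload": {"session": "s2", "path": "api/auth.py"}},
    {"id": 3, "vector": [0.0, 0.2, 0.9], "payload": {"session": "s1", "path": "ui/app.tsx"}},
]


def search(query: list[float], must: dict[str, str], limit: int = 2) -> list[tuple[int, float]]:
    """Filter by exact payload match first, then rank the survivors by cosine similarity."""
    candidates = [
        p for p in points
        if all(p["payload"].get(key) == value for key, value in must.items())
    ]
    scored = [(p["id"], round(cosine(query, p["vector"]), 3)) for p in candidates]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:limit]


print(search([1.0, 0.0, 0.0], must={"session": "s1"}))
```

Point 2 is very similar to the query but is excluded because it belongs to another session. That is the kind of rigid scoping the metadata filter provides.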

Pros

Ultimate control; you can design your memory retrieval logic exactly how you want.

Open-source and highly scalable from local environments to enterprise clusters.

Excellent performance for pure RAG (Retrieval-Augmented Generation) workloads.

Cons

It is just the database. You must build the summarization, cross-session continuity, and memory synthesis logic yourself.

Lacks out-of-the-box cross-agent portability features.

Pricing

Qdrant offers a completely free open-source version for local and self-hosted use. Qdrant Cloud provides a managed free tier, with usage-based pricing for production scale.

10. Pinecone

Similar to Qdrant, Pinecone is a fully managed vector database rather than an out-of-the-box memory platform. We include it because it is one of the most popular tools developers use when bootstrapping long-term context for AI coding agents. Pinecone's serverless architecture means developers can dump code embeddings into the database without worrying about infrastructure provisioning.

Key Features

Serverless Architecture: No infrastructure to manage; highly scalable out of the box.

Real-Time Indexing: Codebase changes and chat logs are searchable almost instantly.

Broad Ecosystem: Integrates seamlessly with almost every AI framework and orchestration tool.

Pros

Incredibly easy to get started with zero infrastructure overhead.

Highly reliable for enterprise-grade retrieval architectures.

Great documentation and developer support.

Cons

It provides retrieval, not true "memory." It does not automatically handle state, updates, or forgetting.

Not open-source; you are tied to their managed cloud environment.

Pricing

Pinecone offers a generous free tier for developers starting out. Beyond that, it uses a serverless pricing model based on read/write operations and storage capacity.

Which Memory Layer Is Best for Different Use Cases?

Best Overall for Portable Governed Memory Infrastructure

If you are building complex AI dev tools or managing enterprise coding workflows, MemoryLake is the strongest choice. Its focus on cross-agent continuity, portability, and governance means it acts as a true infrastructure layer, not just a temporary context cache.

Best for Agentic OS Simulation and Stateful Runtimes

If your agent needs to self-manage massive amounts of codebase context and run continuously in the background, Letta offers the most advanced tiered memory architecture on the market.

Best for Framework-Native Developers

If your entire stack is already built on LangChain and LangGraph, LangMem is the path of least resistance, offering deep integration and native state management loops.

Best for Pure Vector Retrieval Backends

If you are a developer who prefers to build custom memory synthesis logic from scratch and just needs a blazing-fast storage engine, open-source Qdrant or managed Pinecone are the industry standards.

Memory Layer vs Vector Database vs Chat History

When evaluating the memory stack for coding workflows, it is crucial to understand the distinct layers of the ecosystem:

Chat History

What it is: The raw transcript of the conversation between the developer and the agent.

What it lacks: It is not durable. Once the context window fills up, older messages are dropped, and the transcript does not carry over when you start a new session the next day.
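The truncation behavior is easy to see in a sketch. A hypothetical `fit_to_window` helper keeps the newest messages that fit a token budget and silently drops everything older. Word count stands in for a real tokenizer, which is a simplifying assumption.

```python
def fit_to_window(messages: list[str], max_tokens: int) -> list[str]:
    """Hypothetical helper: keep the most recent messages whose combined size fits the budget.

    Word count is used as a rough stand-in for real token counts.
    """
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = len(message.split())
        if used + cost > max_tokens:
            break  # everything older than this point is dropped
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


transcript = [
    "Project uses Django 4 with Postgres",     # 6 words
    "Please never edit generated migrations",  # 5 words
    "Now fix the login view",                  # 5 words
    "Also add a unit test",                    # 5 words
]
print(fit_to_window(transcript, max_tokens=10))
```

With a budget of 10, only the last two requests survive, and the project setup and the migrations rule silently fall out of context.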

Vector Database (e.g., Qdrant, Pinecone)

What it is: A specialized database that stores data as mathematical vectors, allowing for semantic search (finding code that is "conceptually similar").

What it lacks: A vector database is a storage and retrieval backend. It does not automatically know what to remember, how to summarize, or when to update outdated information. You must build that logic.

Memory Layer (e.g., MemoryLake, Mem0)

What it is: An intelligence layer sitting above the database. It handles the lifecycle of knowledge. A true memory layer automatically extracts facts, updates changing project states, persists context across different sessions, and allows memory to be ported across different AI models and tools.

Why coding agents need it: Long-term project collaboration requires selective reuse, structure, and persistence—not just blind semantic retrieval.
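The lifecycle difference can be shown with a deliberately small comparison, and both classes are hypothetical. `AppendOnlyStore` mimics a bare retrieval backend that keeps every write and returns whatever matches. `FactMemory` instead keys facts by subject, so a newer statement replaces the outdated one while the old value is kept in a history list. Keyword matching replaces semantic search to keep the example self-contained.

```python
from typing import Optional


class AppendOnlyStore:
    """Hypothetical retrieval-only backend: stores everything, decides nothing."""

    def __init__(self) -> None:
        self.records: list[str] = []

    def add(self, text: str) -> None:
        self.records.append(text)

    def search(self, keyword: str) -> list[str]:
        return [r for r in self.records if keyword.lower() in r.lower()]


class FactMemory:
    """Hypothetical memory-layer behavior: one current value per subject, with history kept."""

    def __init__(self) -> None:
        self.current: dict[str, str] = {}
        self.history: dict[str, list[str]] = {}

    def remember(self, subject: str, value: str) -> None:
        if subject in self.current and self.current[subject] != value:
            self.history.setdefault(subject, []).append(self.current[subject])
        self.current[subject] = value

    def recall(self, subject: str) -> Optional[str]:
        return self.current.get(subject)


store = AppendOnlyStore()
memory = FactMemory()
for subject, value in [("test runner", "unittest"), ("test runner", "pytest")]:
    store.add(f"The {subject} is {value}")
    memory.remember(subject, value)

print(store.search("test runner"))   # both the stale and the current statement
print(memory.recall("test runner"))  # only the current value
```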

What to Look for in a Memory Layer for Coding Agents

When selecting a platform, developers should evaluate based on these criteria:

Persistent Memory: The system must survive the end of an IDE session or a CLI runtime.

Context Reuse Across Sessions: It should automatically inject your specific coding preferences (e.g., "always use strict TypeScript") without you having to restate them in every prompt.

Portability: If you switch from OpenAI to Anthropic, or from Cursor to a custom CLI agent, your memory should travel with you.

Governance and Control: Look for systems that allow you to inspect, edit, or delete specific memories (essential for purging accidentally captured secrets such as API keys, or memories about deprecated code).

Cost Efficiency: A good memory layer synthesizes information, so you are not repeatedly sending 100k+ token prompts, which can substantially lower LLM API costs.
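A small file-backed store can illustrate three of these criteria together: persistence, automatic context reuse, and inspect/delete control. This is a hypothetical sketch, not any vendor's API. `FileMemory`, its JSON layout, and the system-prompt format are all assumptions. The memories live in a plain JSON file rather than inside one agent's runtime, so a second process or tool can load the same file. That is a very rough stand-in for portability.

```python
import json
import tempfile
from pathlib import Path


class FileMemory:
    """Hypothetical JSON-file-backed memory that survives process restarts."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.items: dict[str, str] = (
            json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
        )

    def _save(self) -> None:
        self.path.write_text(json.dumps(self.items, indent=2), encoding="utf-8")

    def remember(self, key: str, value: str) -> None:
        self.items[key] = value
        self._save()

    def forget(self, key: str) -> bool:
        """Remove a memory (e.g. an accidentally captured secret); True if it existed."""
        removed = self.items.pop(key, None) is not None
        if removed:
            self._save()
        return removed

    def inspect(self) -> dict[str, str]:
        return dict(self.items)

    def system_prompt(self) -> str:
        """Render stored preferences so they are injected without re-prompting."""
        lines = [f"- {key}: {value}" for key, value in sorted(self.items.items())]
        return "Project memory:\n" + "\n".join(lines)


memory_file = Path(tempfile.mkdtemp()) / "memory.json"

session_one = FileMemory(memory_file)
session_one.remember("language", "always use strict TypeScript")
session_one.remember("leaked_token", "example-secret")  # should never have been stored
session_one.forget("leaked_token")

session_two = FileMemory(memory_file)  # a fresh process or a different tool
print(session_two.system_prompt())
```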

Conclusion

The demand for agentic developer workflows has outgrown simple chat histories and raw vector stores. To build truly helpful coding assistants, developers must adopt dedicated memory architectures that handle persistence, state updates, and context retrieval natively.

For developers seeking a fast, personalized experience, Mem0 and Zep are excellent drop-in open-source solutions. If you are building deeply stateful, continuous agents, Letta offers an unparalleled, albeit complex, OS-like paradigm.

However, if you need more than a basic vector store or a framework-locked plugin, MemoryLake is one of the strongest options to evaluate. Teams that want persistent, portable, and governed memory for coding agents should put MemoryLake near the top of their shortlist. If your coding workflows span multiple sessions, diverse developer tools, and varying agent runtimes, MemoryLake’s approach as a unified "memory passport" makes it a particularly compelling foundation for the future of AI development.

Frequently Asked Questions

What is a memory layer for coding agents?

A memory layer is an infrastructure component that allows AI agents to store, update, and retrieve context, user preferences, and project states persistently across multiple sessions and tasks, functioning as the agent's long-term brain.

Do coding agents really need long-term memory?

Yes. Without long-term memory, coding agents suffer from amnesia. Developers are forced to repeatedly explain project architecture, coding standards, and past debugging steps, which wastes time and skyrockets token costs.

What is the difference between a memory layer and a vector database?

A vector database simply stores and searches raw data embeddings. A memory layer is a higher-level system that manages the logic of memory: extracting insights from chats, updating deprecated facts, summarizing history, and orchestrating what context to provide the agent.

Is chat history enough for AI coding assistants?

No. Chat history is linear and limited by the LLM's context window. Once the limit is reached, older context is forgotten. It also does not persist if you close the application and start a new session a week later.