10 Best AI Memory Tools for Investment Analysts Working Across PDFs, Spreadsheets & Research Notes in 2026 (Tested & Compared)

Joy

Introduction

The modern investment analyst workflow in 2026 is defined by data overload. From digesting 10-K filings and earnings call transcripts to cross-referencing multi-tab spreadsheets and proprietary research notes, buy-side and sell-side professionals spend hours synthesizing information. While AI has revolutionized financial research, a critical bottleneck remains: AI forgets. If you are constantly re-uploading the same PDFs, re-explaining your investment thesis, or fighting context window limits, your workflow is fundamentally broken.

What is an AI memory tool, and why do investment analysts need one?

AI memory tools are persistent data layers that allow language models to retain facts, context, and historical reasoning across multiple sessions. For investment analysts, these tools eliminate the need to repeatedly upload PDFs, spreadsheets, and research notes. Instead of treating every prompt as a blank slate, a true AI memory tool builds a durable "second brain" that tracks evolving financial models and ongoing SEC filings and maintains cross-session continuity, which cuts down on prompt stuffing and eases context window limitations.
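To make "retaining facts across sessions" concrete, here is a minimal sketch in plain Python. It is not how any product in this guide is implemented; the `SessionMemory` class, the JSON file, and the `ACME.thesis` key are all hypothetical, chosen only to show a fact written in one session being available in the next without re-uploading anything.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


class SessionMemory:
    """Hypothetical file-backed memory: facts survive after a session ends."""

    def __init__(self, path: Path) -> None:
        self.path = path
        # Load whatever earlier sessions saved, if the file already exists.
        self.facts = json.loads(path.read_text()) if path.exists() else {}

    def remember(self, key: str, value: str) -> None:
        self.facts[key] = value
        # Persist immediately so the fact outlives this object.
        self.path.write_text(json.dumps(self.facts, indent=2))

    def recall(self, key: str) -> Optional[str]:
        return self.facts.get(key)


store_path = Path(tempfile.mkdtemp()) / "analyst_memory.json"

# Session 1: the analyst records a thesis once.
session_one = SessionMemory(store_path)
session_one.remember("ACME.thesis", "Margin expansion driven by pricing power")

# Session 2: a fresh object, standing in for a new chat, reloads the same facts.
session_two = SessionMemory(store_path)
print(session_two.recall("ACME.thesis"))
```

A real memory layer would add extraction, relevance ranking, and access control on top; the sketch only illustrates the persistence that a blank-slate chat lacks.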

Here is a comprehensive buyer's guide comparing the best AI memory tools, memory infrastructure platforms, and context-aware frameworks tailored for investment research workflows.

Quick Comparison of the 10 Best AI Memory Tools

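| Product | Best For | Category | Pricing |
| --- | --- | --- | --- |
| MemoryLake | Persistent, cross-session research | Memory Infrastructure | Free; Pro $19/month; Premium $199/month |
| Mem0 | Developer-focused app memory | API/Memory Layer | Free Hobby; Starter $19/month; Pro $249/month; custom Enterprise |
| Zep | Fast, low-latency assistant recall | Memory API | Flex $125/month; Flex Plus $375/month; custom Enterprise |
| Letta | Agentic, tiered memory management | OS/Framework | Pro $20/month; Max Lite $100/month; Max $200/month |
| Glean | Enterprise-wide AI search | Enterprise Search | Custom enterprise pricing |
| Supermemory | Personal bookmarking & notes | Personal AI | Free; Pro $19/month; Scale $399/month; custom Enterprise |
| Claude Projects | Long-context single-platform analysis | Native Feature | Included with Claude Pro ($20/user/month) and Claude for Enterprise |
| ChatGPT Memory | Lightweight chat continuity | Native Feature | Free; Go $8/month; Plus $20/month; Pro from $100/month |
| LangMem | LangChain ecosystem builders | Framework | Open-source |
| Pinecone | Custom vector search builds | Vector Database | Free; Starter $50/month; Pro $500/month; custom Enterprise |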

1. MemoryLake

MemoryLake positions itself as a persistent AI memory infrastructure—a portable memory layer that acts as the "second brain" for AI systems. Rather than a simple chat add-on or a basic vector database, MemoryLake is designed to be the memory passport for agents and AI workflows. For investment analysts, it addresses the core pain point of cross-session continuity. According to MemoryLake’s public materials, it excels at managing file-heavy knowledge workflows (PDFs, spreadsheets, documents) while maintaining strict governance, traceability, and version-aware memory, making it an exceptionally strong fit for long-term investment research.

Key Features

Portable AI memory layer that works across different LLMs and agentic workflows.

Advanced multimodal and file-heavy ingestion for PDFs, spreadsheets, and research notes.

Built-in governance, provenance, and traceability for financial compliance.

Cross-session continuous memory that reduces prompt stuffing and repeated context rebuilding.

Version-aware memory tracking to monitor how an investment thesis evolves over time.

Pros

Provides genuine durable memory, separating long-term research context from immediate chat history.

Highly portable; analysts aren't locked into a single AI provider's ecosystem.

Strong emphasis on data governance and source traceability, which is critical for financial institutions.

Cons

As an infrastructure-grade tool, it may offer more capabilities than a solo user looking for a simple note-taking app might need.

Requires a slight paradigm shift to understand "memory as a portable layer" versus standard RAG.

Implementation in highly restrictive on-prem legacy banking systems may require custom configuration.

Pricing

MemoryLake uses a three-tier pricing model: free for testing, Pro at $19/month ($16 on the yearly plan), and Premium at $199/month ($166 on the yearly plan).

2. Mem0

Mem0 is a popular developer-centric memory layer designed to add personalized, persistent memory to AI applications. While primarily aimed at software engineers building AI tools, it provides a solid foundation for financial platforms needing to remember user preferences, previous queries, and interaction history. For an analyst workflow, Mem0 helps internal firm-built tools remember specific user contexts across sessions.

Key Features

Universal memory API for AI applications.

Automatic entity extraction and relationship mapping.

Cross-platform memory synchronization.

Adaptive personalization based on user interactions over time.

Developer-friendly SDKs (Python, TypeScript).

Pros

Excellent for development teams building bespoke internal investment research apps.

Automatically curates and updates user profiles and preferences.

Lightweight integration process.

Cons

Primarily an API for developers, not an out-of-the-box UI for non-technical analysts.

Lacks the deep file-heavy governance layer required for complex, multi-layered financial document workflows.

Focuses heavily on user personalization rather than rigorous thesis tracking.

Pricing

Mem0 offers a four-tier pricing model: a free Hobby tier, a $19/month Starter plan, a $249/month Pro plan, and a custom-priced Enterprise tier.

3. Zep

Zep is a long-term memory service built specifically for AI assistants and agents. It focuses heavily on low-latency recall and extracting facts, summaries, and conversational context from dialogue. In an investment context, if an analyst is using a voice or text-based AI assistant to brainstorm trades or recall past discussions about a specific asset, Zep operates in the background to ensure the assistant remains context-aware without latency spikes.

Key Features

Perpetual memory with automatic summarization.

Fact extraction and temporal tracking of information.

Extremely low-latency retrieval architecture.

Vector search combined with structured data recall.

Privacy-first design with local deployment options.

Pros

Lightning-fast recall speeds, ideal for real-time AI interactions.

Automatic summarization prevents the context window from bloating unnecessarily.

Fact extraction works well for pulling specific numbers discussed in previous chats.

Cons

Heavily optimized for conversational AI, meaning it is less focused on complex document-heavy tasks (like parsing 50-tab Excel models).

Like Mem0, it requires a developer to implement into an existing tool.

Less emphasis on portable memory across totally disjointed workflows.

Pricing

It provides predictable credit-based pricing: Flex at $125/month, Flex Plus at $375/month, and a custom Enterprise tier for large-scale needs.

4. Letta

Letta (the commercial platform behind the open-source MemGPT framework) approaches memory from an operating system perspective. It utilizes a tiered memory architecture—managing what is kept in "main memory" (the context window) versus "external storage" (disk). For an investment analyst, Letta provides a highly agentic approach, allowing AI systems to autonomously decide when to page in older research notes or SEC filings during a deep dive, mimicking how a human retrieves files from a filing cabinet.
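The tiered idea can be illustrated with a toy two-tier store. This is a sketch of the general paging concept only, not Letta's or MemGPT's actual API; the `TieredMemory` class, the slot-based budget, and the keyword match are simplifying assumptions (a real agent would count tokens and decide what to page in on its own).

```python
from collections import deque


class TieredMemory:
    """Toy two-tier memory: a small 'main context' plus an unbounded archive."""

    def __init__(self, context_slots: int) -> None:
        self.context_slots = context_slots
        self.main_context = deque()  # stands in for the context window
        self.archive = []            # stands in for external storage

    def add(self, note: str) -> None:
        self.main_context.append(note)
        # Once the window is full, evict the oldest notes to the archive.
        while len(self.main_context) > self.context_slots:
            self.archive.append(self.main_context.popleft())

    def page_in(self, keyword: str) -> list:
        """Move archived notes that mention the keyword back into main context."""
        hits = [n for n in self.archive if keyword.lower() in n.lower()]
        self.archive = [n for n in self.archive if n not in hits]
        for note in hits:
            self.add(note)
        return hits


memory = TieredMemory(context_slots=2)
memory.add("Q1 note: ACME gross margin 41%")
memory.add("Q2 note: ACME guided capex higher")
memory.add("Q3 note: BETA lost a key customer")  # pushes the Q1 note to the archive

recalled = memory.page_in("margin")  # brings the Q1 note back, evicting the Q2 note
print(recalled)
print(list(memory.main_context))
```

Note that paging a note in can push another one out, which is exactly the trade-off a tiered design manages: the window stays bounded while nothing is lost outright.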

Key Features

Tiered memory architecture inspired by traditional OS memory management.

Agentic self-editing capabilities (the AI decides what to remember and forget).

Infinite context illusion through intelligent data paging.

Open-source foundation (MemGPT).

Stateful AI management.

Pros

Fascinating autonomous memory management; the AI updates its own context intelligently.

Effectively solves the context window limitation for long, ongoing research tasks.

Highly customizable for complex agentic workflows.

Cons

Can be overly complex for standard retrieval tasks.

The autonomous nature of memory editing might pose traceability challenges if an analyst needs strict provenance on why a fact was altered.

Steep learning curve for implementation.

Pricing

Letta offers three paid plans: Pro ($20/month) for personal use, Max Lite ($100/month) for professionals, and Max ($200/month) for power users, each with increasing usage limits and features.

5. Glean

Glean is not a direct AI memory tool in the pure infrastructure sense; rather, it is an enterprise AI search and knowledge platform. However, because it securely connects to all of a company's internal apps (Google Drive, SharePoint, Jira, Slack), it functionally serves as a memory retrieval system for an entire organization. For portfolio research teams, Glean allows analysts to query internal proprietary data instantly, synthesizing insights across past analyst reports and spreadsheets without manual re-uploading.

Key Features

Deep integrations with over 100 enterprise SaaS applications.

Strict adherence to existing enterprise permission and governance models.

Centralized conversational AI interface grounded in internal knowledge.

Real-time indexing of company-wide data.

Pre-built AI templates for common enterprise queries.

Pros

Unmatched for retrieving existing company knowledge across diverse, siloed platforms.

World-class enterprise security and permission handling.

Ready to use out-of-the-box for non-technical analysts.

Cons

It is an enterprise search platform, not a portable memory layer for custom AI workflows.

Platform-bound; you operate within Glean's ecosystem rather than carrying memory to external agentic tools.

Heavy enterprise implementation process.

Pricing

Custom pricing based on seat count and enterprise scale (Contact sales).

6. Supermemory

Supermemory positions itself as a personal AI second brain. It allows users to bookmark web pages, upload PDFs, and save text snippets into a centralized, searchable AI canvas. For individual investment analysts or solo researchers, Supermemory is a highly accessible way to collect daily financial news, broker reports, and random research notes, turning scattered digital clutter into a coherent, queryable knowledge base.

Key Features

Browser extension for instant bookmarking and clipping.

Native canvas UI for visual organization.

AI chat interface grounded exclusively in user-saved content.

Support for PDFs, Markdown, and web links.

Privacy-focused individual knowledge management.

Pros

Extremely user-friendly with a beautiful, intuitive interface.

Great for solo analysts curating a personal feed of daily financial intelligence.

Zero technical setup required.

Cons

Lacks the infrastructure-level scale required for enterprise buy-side teams.

Not designed for complex integrations with custom financial models or agentic workflows.

Struggles with massive, multi-gigabyte document corpora compared to enterprise platforms.

Pricing

Supermemory offers four pricing tiers: Free at $0/month, Pro at $19/month, Scale at $399/month, and custom Enterprise plans.

7. Claude Projects

Claude Projects is a native feature within Anthropic’s Claude ecosystem that leverages Claude’s massive 200k+ token context window to create isolated workspaces. While technically a "long-context workflow" rather than persistent memory infrastructure, it solves a similar problem for analysts. By uploading foundational PDFs, company filings, and research notes into a Project, the analyst ensures Claude always has that background context for any new chat started within that workspace.

Key Features

Project-specific knowledge bases (upload up to 200k tokens of documents).

Custom instructions specific to the workspace.

Artifacts feature for side-by-side document generation and code editing.

Seamless handling of dense, text-heavy PDFs.

Zero configuration required.

Pros

Anthropic’s models are exceptionally good at nuanced financial analysis and reading comprehension.

Incredibly easy to use; just drag and drop files to build the baseline context.

Artifacts UI is excellent for iterating on financial models or report drafts.

Cons

It is not persistent across different platforms; you are completely locked into the Anthropic ecosystem.

No true "memory update" over time—it relies entirely on static document uploads and brute-forcing the context window.

Subject to platform message limits based on context size.

Pricing

Included with Claude Pro ($20/user/month) and Claude for Enterprise (Custom pricing).

8. ChatGPT Memory

OpenAI introduced ChatGPT Memory to allow its standard consumer and enterprise chat interfaces to remember details across all chats. For a financial analyst, this means ChatGPT can remember your firm's formatting preferences, the specific tickers you cover, or the stylistic tone of your research notes. It is a lightweight, out-of-the-box solution for continuity, though it is bound strictly to the ChatGPT interface.

Key Features

Automatic, implicit memory retention based on conversation cues.

Explicit memory commands (e.g., "Remember that I only cover the semiconductor sector").

Memory management UI to review and delete stored facts.

Cross-device synchronization (web to mobile).

Privacy controls to disable memory for temporary chats.

Pros

Zero setup required; it happens naturally in the background.

Highly effective for stylistic preferences and basic user facts.

Native to the most widely used LLM interface.

Cons

Far too basic for rigorous investment workflows; it won't reliably parse and remember the intricacies of a 100-page 10-K across sessions.

Platform-locked to OpenAI.

Lacks provenance—you cannot easily trace where a specific generated memory originated from in your research notes.

Pricing

ChatGPT provides several personal subscription tiers: a free plan, Go at $8 per month, Plus at $20 per month with a free trial, and Pro starting from $100 monthly.

9. LangMem

LangMem is an architectural approach and toolset provided by the LangChain ecosystem. It provides developers with the primitives needed to build stateful, long-term memory into LangChain-powered applications. If an investment bank's internal engineering team is building a custom RAG application for equity analysts, LangMem provides the framework to programmatically extract, store, and inject memories into the LLM chain.

Key Features

Deep integration with the LangChain and LangGraph ecosystems.

Programmatic control over memory extraction and storage.

Tools for summarizing and consolidating memories over time.

Compatible with various vector stores and databases.

Agent-state persistence.

Pros

Ultimate flexibility for developers already building in LangChain.

Allows for highly customized memory routing specific to niche financial use cases.

Open-ecosystem support.

Cons

Strictly a developer framework, offering zero utility for an analyst without an engineering team.

Requires significant custom logic to handle complex document versioning and file-heavy ingestion cleanly.

Overhead of maintaining the memory logic falls entirely on the internal team.

Pricing

Open-source; costs are associated with the underlying infrastructure and LangSmith (usage-based).

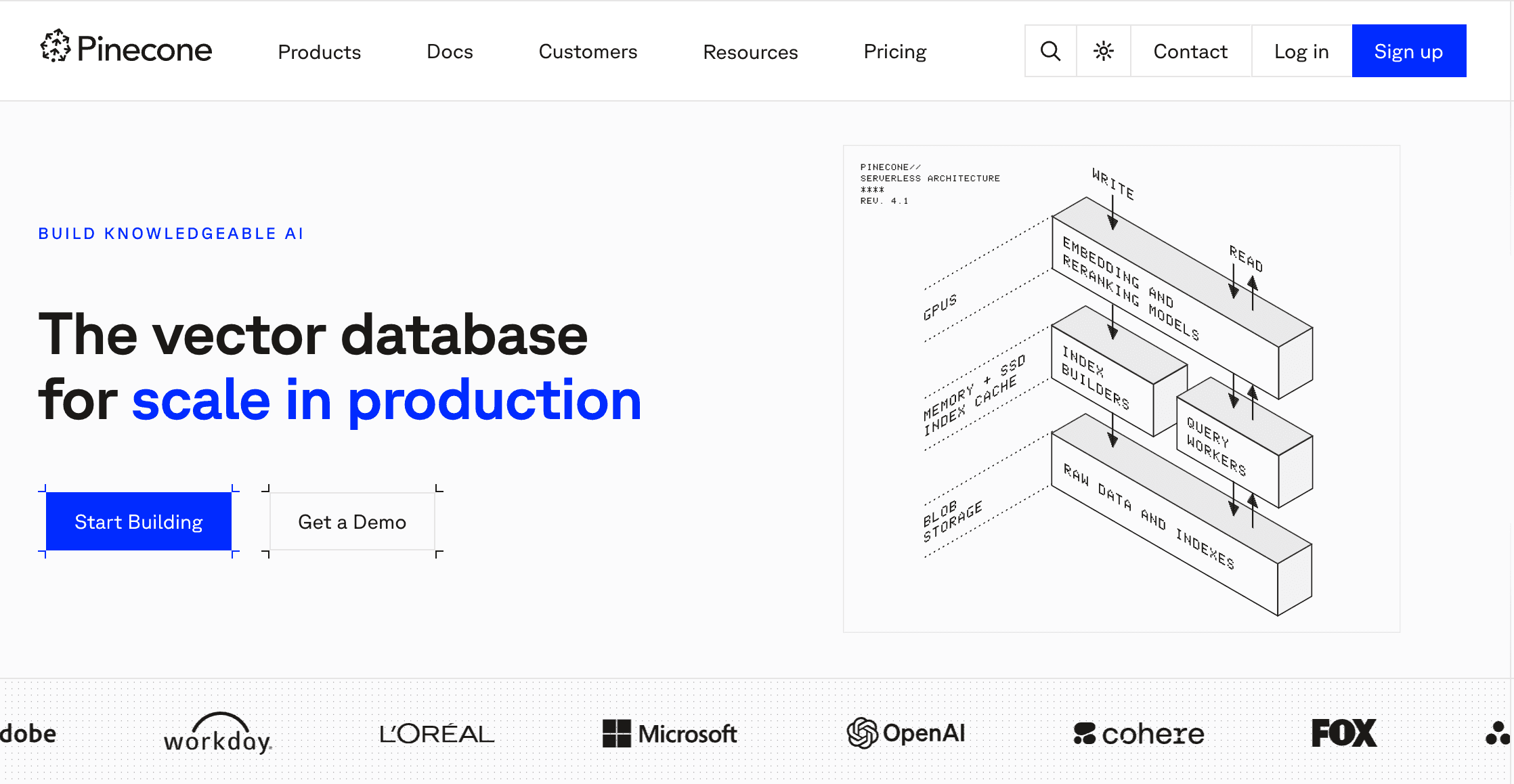

10. Pinecone

Pinecone is not an AI memory tool in the user-facing sense; it is a fully managed vector database. We include it in this comparison because many teams mistakenly assume they only need a vector database to solve the "memory" problem. While Pinecone is exceptional at storing and retrieving high-dimensional vector embeddings, it lacks the out-of-the-box application logic, provenance routing, and continuous memory features required by an analyst. It is core infrastructure, meant to be built upon.
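What a vector database contributes can be shown with a few lines of plain Python. The sketch below is not Pinecone's API; the three-dimensional vectors, the document IDs, and the `top_k` helper are made up for illustration (real embeddings have hundreds or thousands of dimensions and come from an embedding model).

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query, index, k=1):
    """Return the IDs of the k stored vectors most similar to the query."""
    ranked = sorted(
        index.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [doc_id for doc_id, _ in ranked[:k]]


# Hypothetical embeddings for three research documents.
index = {
    "acme_10k_2024": [0.9, 0.1, 0.0],
    "acme_q3_call": [0.7, 0.6, 0.1],
    "beta_model_notes": [0.0, 0.2, 0.9],
}

query_vector = [0.8, 0.5, 0.0]
nearest = top_k(query_vector, index, k=2)
print(nearest)  # ['acme_q3_call', 'acme_10k_2024']
```

The function answers "which stored items look most like this query" and nothing more. Deciding what to store, when to overwrite it, and where it came from is the application logic a team would still have to build.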

Key Features

Serverless vector database architecture.

Ultra-fast search across billions of embeddings.

Real-time index updates.

Hybrid search capabilities (combining keyword and vector search).

Enterprise-grade security and scale.

Pros

Unmatched scalability for massive institutional data sets.

Incredibly fast retrieval times.

Seamless integration with almost every AI developer framework.

Cons

It is raw infrastructure. It provides retrieval, not "memory logic," "governance workflows," or UI.

Requires significant engineering resources to build an analyst-friendly application on top of it.

Does not inherently track cross-session continuous reasoning without custom logic.

Pricing

Pinecone offers a free tier, a $50/month Starter plan, a $500/month Pro plan, and custom enterprise pricing.

Best Picks by Use Case

For Persistent, Cross-Platform Analyst Workflows: MemoryLake. If your workflow requires true durable memory, heavy document handling (PDFs/spreadsheets), and strict provenance that travels across tools, MemoryLake stands out as the premium infrastructure choice.

For Enterprise Internal Search: Glean. If the primary goal is finding internal documents across SharePoint and Google Drive securely, Glean is unmatched.

For Solo Analysts: Supermemory. If you just need a personal, easy-to-use second brain to bookmark articles and clip research notes.

For Single-Platform Long Context: Claude Projects. If you don't need portable memory and are happy to upload a static batch of PDFs into one workspace to leverage Anthropic's reasoning capabilities.

Why Investment Analysts Need AI Memory Tools

For equity analysts, portfolio managers, and VC/PE research teams, knowledge is cumulative. However, standard LLM chat interfaces are episodic. Investment research workflows demand more than simple conversational AI for several reasons:

Cross-Session Continuity: An analyst might track a single ticker over several quarters. Standard AI forgets last quarter's thesis as soon as the chat window closes. Memory tools maintain this ongoing context.

Heavy File Workflows: Financial research relies on massive files—hundreds of pages of PDFs, complex Excel models, and scattered research notes. Constantly re-uploading them consumes time and quickly maxes out context limits.

Reduction of Prompt Stuffing: Without memory, analysts must write massive prompts restating their firm's investment criteria, historical findings, and compliance guidelines every single time.

Durable Research Memory: Investment decisions require traceability. You need to know why an AI generated a specific insight and which earnings transcript it pulled from. True memory tools provide provenance.
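Provenance, as described in the last point, amounts to never storing a fact without its source. A minimal sketch of that idea follows; the `SourcedFact` record, the file names, and the figures are hypothetical examples, not output from any tool in this guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourcedFact:
    """A remembered claim that always carries its origin."""
    claim: str
    source_document: str
    location: str  # page number, cell reference, or transcript timestamp


def cite(fact: SourcedFact) -> str:
    """Render a claim together with where it came from."""
    return f"{fact.claim} [{fact.source_document}, {fact.location}]"


def facts_from(document: str, facts: list) -> list:
    """List every remembered claim that traces back to one document."""
    return [f for f in facts if f.source_document == document]


# Illustrative entries only; the numbers are invented.
facts = [
    SourcedFact("FY2024 revenue grew 12%", "acme_10k_2024.pdf", "p. 47"),
    SourcedFact("Base-case WACC is 8.5%", "acme_dcf.xlsx", "Assumptions!B12"),
]

print(cite(facts[0]))
print(facts_from("acme_dcf.xlsx", facts))
```

Keeping the record immutable (`frozen=True`) is a deliberate choice in this sketch: a correction becomes a new fact with its own source rather than a silent overwrite.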

What to Look for in an AI Memory Tool for PDFs, Spreadsheets, and Research Notes

Not all memory tools are created equal. When evaluating platforms for financial workflows, look for these critical capabilities:

File-Heavy Knowledge Handling: The tool must seamlessly ingest and recall data from complex formats like multi-sheet financial spreadsheets and dense regulatory PDFs.

Portability: Can you carry your memory across different tools and models (e.g., from GPT-4 to Claude 3.5 Sonnet to local models)? A portable memory passport is highly valuable.

Governance and Traceability: Financial institutions require strict compliance. The tool must offer provenance (knowing the exact source document of a memory) and data governance.

Persistent vs. In-Context Memory: Look for tools that act as persistent infrastructure, intelligently routing relevant historical data to the AI without overflowing the immediate context window.

AI Memory Tools vs Chat History, RAG, and Vector Search

Investment analysts often encounter confusing terminology. Here is how AI memory tools differ from other technologies:

Chat History vs. AI Memory: Chat history simply appends previous messages to the current prompt until the context window overflows. AI memory selectively extracts facts, updates state, and injects only relevant context, ensuring long-term continuity without token bloat.

Vector Search / Databases vs. AI Memory: A vector database (like Pinecone) is a storage layer. It excels at similarity search but doesn't understand "contextual state." AI memory infrastructure provides the reasoning layer on top of storage, deciding what to remember, when to update it, and how it connects to the user's ongoing workflow.

RAG (Retrieval-Augmented Generation) vs. AI Memory: Traditional RAG retrieves static documents based on a query. AI memory goes further by tracking the evolution of your analysis, maintaining a continuous, version-aware thread of your research across multiple sessions.
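The "version-aware thread" in the last comparison can be sketched as a store that keeps every dated revision of a fact instead of only the latest document. This is an illustration under stated assumptions, not any vendor's data model; `VersionedMemory`, the `ACME.rating` key, and the dates are hypothetical, and dates are ISO strings so that they sort chronologically.

```python
from dataclasses import dataclass, field


@dataclass
class VersionedMemory:
    """Keeps the full dated history of each remembered fact."""
    history: dict = field(default_factory=dict)

    def update(self, key: str, value: str, as_of: str) -> None:
        # Append a new version rather than overwriting the old one.
        self.history.setdefault(key, []).append((as_of, value))

    def current(self, key: str):
        """Return the most recent value, or None if the key is unknown."""
        versions = self.history.get(key)
        if not versions:
            return None
        return max(versions, key=lambda version: version[0])[1]

    def evolution(self, key: str) -> list:
        """Return all versions in chronological order."""
        return sorted(self.history.get(key, []), key=lambda version: version[0])


memory = VersionedMemory()
memory.update("ACME.rating", "Buy", as_of="2025-02-10")
memory.update("ACME.rating", "Hold", as_of="2025-08-04")

print(memory.current("ACME.rating"))    # Hold
print(memory.evolution("ACME.rating"))  # both versions, oldest first
```

A plain retrieval step would surface whichever note best matches the query; the history here additionally answers when the view changed and what it was before.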

Conclusion

The role of the investment analyst in 2026 is no longer about gathering data; it is about maintaining a cohesive narrative across thousands of fragmented data points. Relying on basic chat interfaces that forget your thesis the moment the session ends is an inefficient use of time. While simple chat history or basic RAG frameworks might suffice for lightweight queries, serious financial research demands durable, scalable memory.

If your workflow has outgrown chat history and repeated prompt stuffing, MemoryLake is worth a closer look. For analysts dealing with ongoing research across PDFs, spreadsheets, and notes, it stands out as a particularly strong option: it offers persistent, governed, and reusable AI memory that tracks complex files across sessions, which teams that need true memory infrastructure will find especially compelling. Assessing your firm's need for portability, file ingestion, and compliance will ultimately guide you to the right choice for your research stack.

Frequently Asked Questions

What is an AI memory tool?

An AI memory tool is an infrastructure or application layer that allows artificial intelligence models to persist facts, user preferences, historical interactions, and document knowledge across separate sessions, functioning as a continuous "second brain."

Why do investment analysts need AI memory tools?

Analysts handle massive volumes of PDFs, 10-Ks, and spreadsheets. Without memory tools, they face context window limits and must repeatedly upload the same files or prompt-stuff the AI with historical thesis backgrounds. Memory tools provide continuity for long-term research.

Can AI memory tools handle PDFs and spreadsheets?

Yes. The more advanced memory infrastructure platforms, like MemoryLake, are specifically designed for file-heavy knowledge workflows, parsing dense financial documents and spreadsheets into reusable memory blocks.

Are AI memory tools better than vector databases or RAG for analysts?

Yes, for workflow continuity. While RAG and vector databases are excellent for static document retrieval, AI memory tools add stateful continuity, tracking how an analyst's research and thesis evolve over time rather than just performing one-off searches.

Which AI memory tool is best for long-term investment research?

For teams that require governance, portability, and the ability to maintain long-term research context across multiple tools and heavy document sets, memory infrastructure platforms like MemoryLake are highly recommended.