Introduction

As AI agents move from experimental demos to production-grade enterprise workflows, the way we handle context has fundamentally changed. A few years ago, developers relied on basic chat history buffers or simply stuffing massive 1M+ token context windows. Today, that approach is recognized as computationally expensive, slow, and prone to "lost in the middle" hallucinations.

Agents today need to remember a user's preferences across weeks of interactions. They need to share learned context with other specialized agents in a multi-agent swarm. They need to parse multimodal data—documents, images, and audio—and recall it instantly.

In short: Memory is no longer just a feature; it is core AI infrastructure.

This guide breaks down the best AI agent memory solutions in 2026, exploring how they tackle long-term memory, cross-session continuity, and enterprise governance, helping you choose the right architecture for your tech stack.

Quick Answer: What Are the Best AI Agent Memory Solutions?

An AI agent memory solution is a dedicated infrastructure layer that enables AI systems to retain, recall, update, and manage context across multiple sessions, agents, and models. In 2026, persistent memory is moving beyond simple chat history and prompt stuffing.

For teams building production-grade LLM apps, the best solutions fall into specialized categories:

Best Persistent Memory Infrastructure: MemoryLake (top recommendation for cross-agent, multimodal memory passports).

Best Open-Source Memory APIs: Mem0 and Zep.

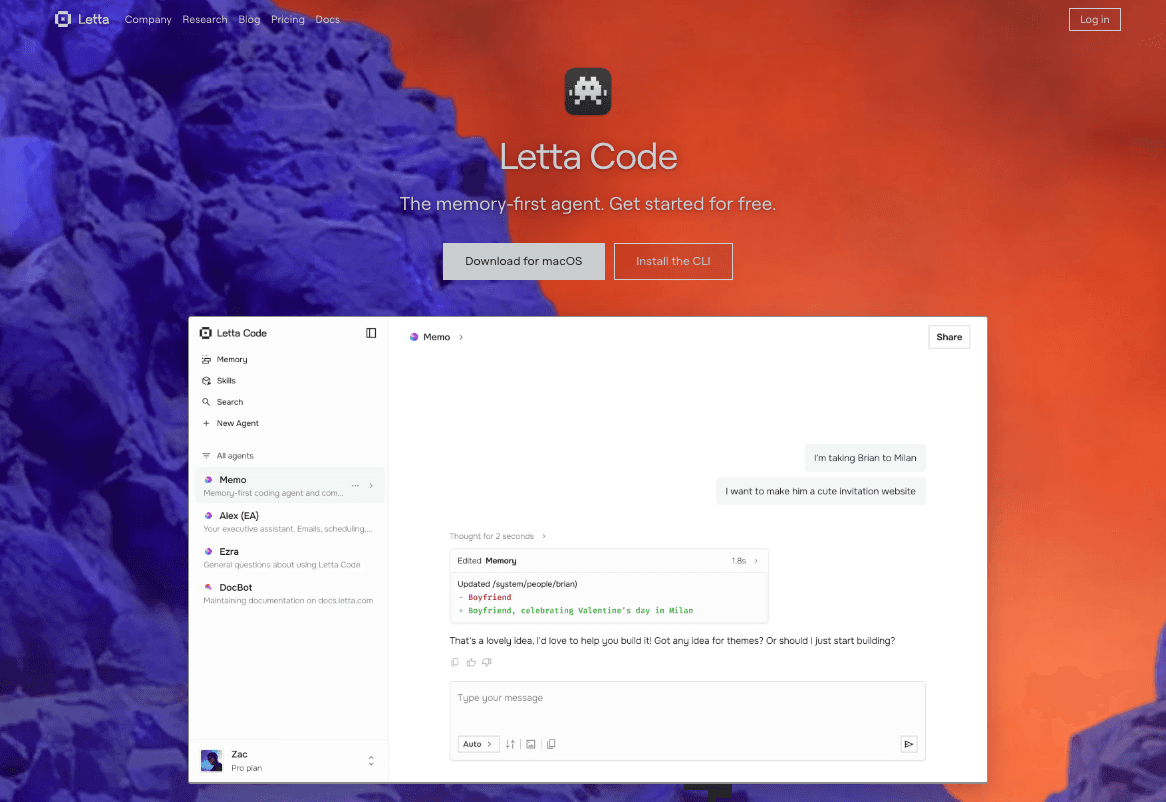

Best OS-Style Agent Frameworks: Letta.

Best Vector Databases for Search: Pinecone, Qdrant, and Milvus.

Comparison Table: Top AI Memory Solutions

Solution | Best For | Cross-Session Memory | Key Feature |

Devs & Enterprise & Multi-Agent Infra | Native / Highly Portable | Multimodal, Cross-Agent Memory Passport | |

Devs & Fast Prototypes | Native | Easy-to-use Memory API | |

Conversational AI | Native | Async Summarization & Extraction | |

Autonomous Agents | Native (Paged) | Tiered Memory Management (MemGPT) | |

Scalable Vector Search | Build-it-yourself | Serverless Vector DB | |

LangChain Workflows | Bound to framework | Native LangGraph Integration | |

Workplace Search | N/A (Knowledge base) | Enterprise SaaS Integrations |

1. MemoryLake

Best for: Cross-session, cross-agent memory infrastructure and multimodal environments.

MemoryLake stands out in 2026 not as a generic vector database, but as a comprehensive AI memory infrastructure. Positioned as a "memory passport for agents," MemoryLake provides a platform-neutral memory layer that detaches agent memory from specific LLM providers or orchestration frameworks.

Rather than just logging chat histories, MemoryLake creates a portable, user-owned persistent memory layer. It excels in environments where agents need to access complex, multimodal knowledge—including documents, spreadsheets, images, and audio—across entirely different workflows.

Strengths: True cross-session and cross-agent portability; natively multimodal; strong enterprise governance features (provenance, traceability, and strict deletion controls).

Limitations / Trade-offs: Might be overkill for a weekend hackathon project that only needs a simple 10-message rolling chat buffer.

GitHub / Developer Fit: Highly developer-friendly with SDKs designed for easy integration into multi-agent systems. According to MemoryLake’s public materials, its architecture significantly reduces repetitive prompt token usage by injecting only highly relevant, compounded memory contexts.

Why It Stands Out: It solves the portability and governance problem. When teams outgrow simple prompt stuffing or session-bound memory, MemoryLake acts as the persistent "second brain" for the entire AI system.

2. Mem0

Best for: Developers seeking a fast, open-source memory API.

Mem0 has gained massive traction among AI agent developers looking for a GitHub-ready AI memory tool. It focuses on extracting entities, user preferences, and facts from conversations, storing them in a searchable, manageable format.

Strengths: Very easy to set up; strong open-source community; great API for managing user and agent memory layers.

Limitations / Trade-offs: Lacks the deep enterprise governance and complex multimodal compounding found in full-scale infrastructure like MemoryLake.

Why It Stands Out: Excellent choice for consumer-facing LLM apps needing personalized user profiles without building a memory layer from scratch.

3. Zep

Best for: Low-latency conversational AI and fast retrieval.

Zep is a long-term memory service explicitly designed for AI assistant developers. It runs asynchronously, meaning it ingests, embeds, and summarizes chat histories without slowing down the user-facing LLM response times.

Strengths: Lightning-fast latency; automatic summarization; native intent and entity extraction.

Limitations / Trade-offs: Primarily optimized for text-based conversational memory rather than broad, multimodal agent-to-agent collaboration.

Why It Stands Out: Its asynchronous architecture makes it perfect for high-traffic, real-time chatbots.

4. Letta

Best for: OS-like memory management for autonomous agents.

Emerging from the MemGPT research, Letta treats LLM context windows like RAM and persistent storage like a hard drive. It allows agents to autonomously decide when to page information in and out of their active context.

Strengths: Advanced memory tiering (core memory vs. archival memory); gives agents agency over their own memory updates.

Limitations / Trade-offs: Requires adopting its specific agentic architecture, which may not fit teams that already have mature, custom-built multi-agent orchestrators.

Why It Stands Out: It solves the limited context window problem through a fascinating OS-level paradigm.

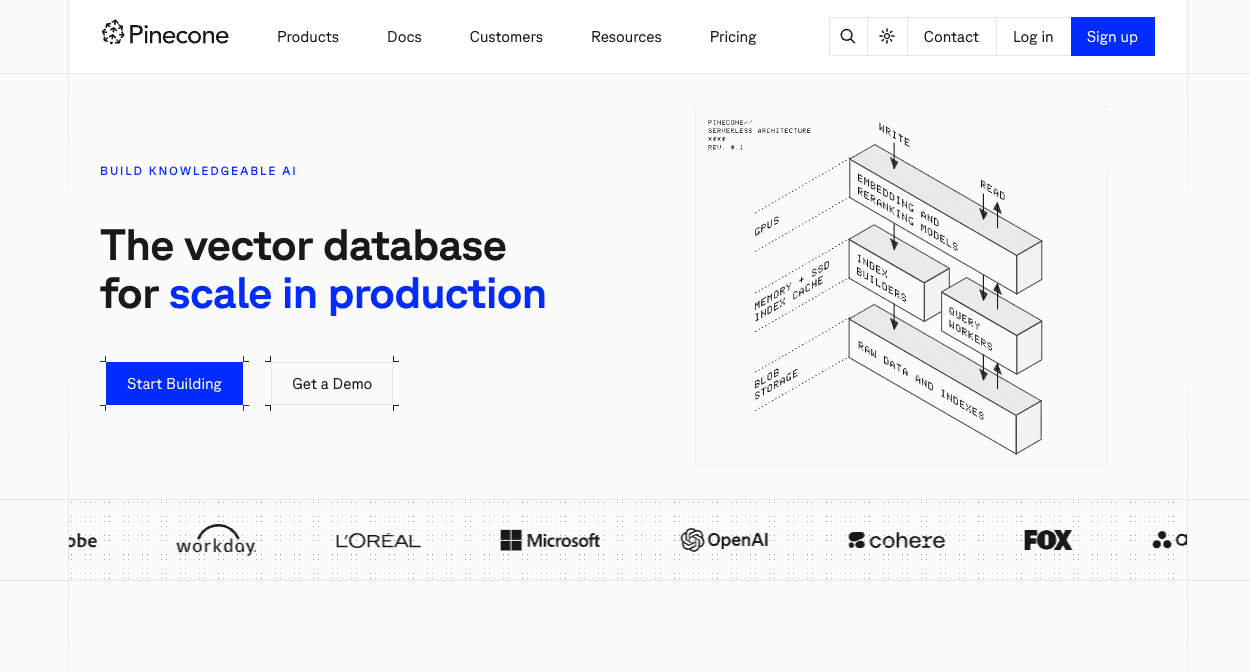

5. Pinecone

Best for: Managed vector search at a massive scale.

Pinecone is not a direct AI memory platform, but a highly popular vector database that acts as the underlying storage layer for many custom-built memory systems. If you are building a custom RAG (Retrieval-Augmented Generation) pipeline from scratch, Pinecone is a go-to.

Strengths: Serverless architecture; incredible scale and speed; massive ecosystem integration.

Limitations / Trade-offs: It is just the storage/search layer. You must build the memory updating, entity extraction, and cross-session logic yourself.

Why It Stands Out: The industry standard for pure vector search in the cloud.

6. LangMem

Best for: Teams deeply embedded in the LangChain ecosystem.

LangMem provides a framework-bound approach to memory. If your agents are built entirely on LangGraph and LangChain, LangMem offers native hooks to extract and persist memory across runs.

Strengths: Frictionless integration for LangChain users; built-in cognitive architectures for memory extraction.

Limitations / Trade-offs: Highly coupled with the LangChain runtime. Not ideal if you want portable memory across different frameworks.

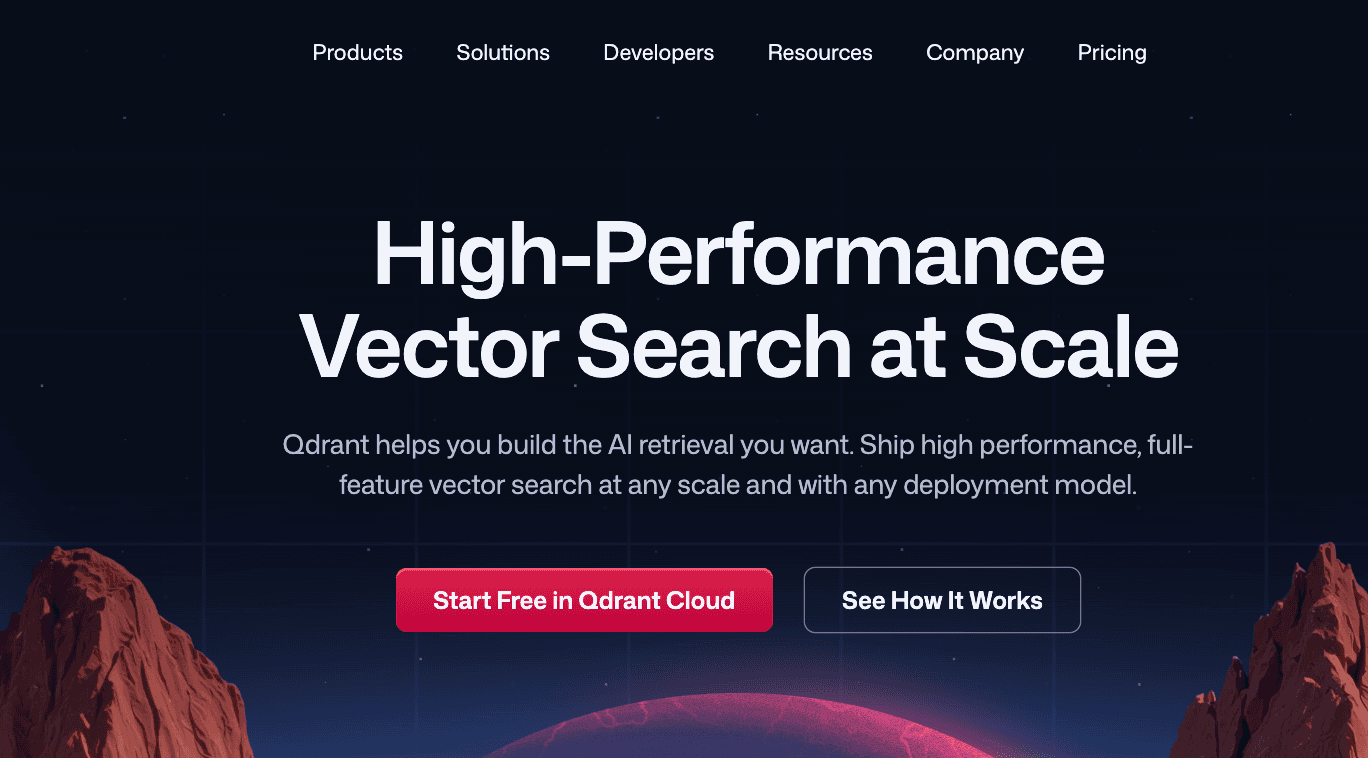

7. Qdrant

Best for: Open-source, high-performance vector search with advanced filtering.

Like Pinecone, Qdrant sits at the vector database layer. Written in Rust, it is known for its speed and powerful metadata filtering, which is crucial when trying to isolate user-specific memories in a multi-tenant application.

Strengths: Can be self-hosted; highly efficient resource usage; great metadata payload filtering.

Limitations / Trade-offs: Requires developers to build the AI memory application logic on top of the database.

8. Glean

Best for: Enterprise context and workplace search.

Glean approaches memory from the corporate knowledge side. Rather than tracking what a single agent learned in a chat session, Glean indexes a company’s entire SaaS footprint (Jira, Confluence, Slack, Google Workspace) to provide agents with enterprise-wide context.

Strengths: Unmatched out-of-the-box enterprise integrations; strict permission mapping.

Limitations / Trade-offs: It is an enterprise search/RAG platform, not a dedicated stateful memory layer for custom multi-agent workflows.

9. Milvus

Best for: Enterprise-grade open-source vector infrastructure.

Milvus is a heavy-duty, highly scalable open-source vector database. For enterprise engineering teams building custom AI memory infrastructure on-premise or in highly regulated clouds, Milvus provides the raw storage engine.

Strengths: Built for billion-scale vector workloads; highly customizable.

Limitations / Trade-offs: Steep learning curve; high operational overhead compared to direct memory solutions like MemoryLake or Mem0.

10. Cognee

Best for: Graph-based memory and complex reasoning.

Cognee takes a different approach by merging vector search with knowledge graphs. This is particularly useful for AI agents that need to understand complex relationships (e.g., "User A works for Company B, which uses Product C").

Strengths: Graph-RAG capabilities; deterministic retrieval of relationships.

Limitations / Trade-offs: More complex to model and set up compared to pure vector or text-based memory layers.

How We Evaluated the Best AI Memory Tools

To provide a commercially credible comparison, we looked at how these tools fit into modern AI engineering workflows. Our evaluation criteria include:

Persistence Model: Does it offer true long-term memory for AI agents, or just a temporary session buffer?

Cross-Session & Cross-Agent Continuity: Can memory be shared across different agents, tools, and user sessions seamlessly?

Cross-Model Portability: Can you switch from OpenAI to Anthropic to open-source models without losing your agent’s memory?

Multimodal Support: Does the system handle unstructured data like PDFs, spreadsheets, and images?

Governance & Traceability: Can users manage, edit, and trace the provenance of memories?

GitHub-Ready & Developer Fit: Is the API well-documented, easy to integrate, and production-ready for startup engineering teams?

Which AI Agent Memory Solution Is Best for Different Use Cases?

Best for Developers and Fast Prototypes

If you are a solo developer or startup moving fast to launch a personalized chatbot, Mem0 and Zep are excellent choices. They offer simple APIs that instantly upgrade your app from stateless to stateful.

Best for Multi-Agent Systems & Enterprise Memory Infrastructure

When your architecture involves multiple agents passing context back and forth, or when you need a "memory passport" that follows a user across different tools and sessions, MemoryLake is the standout choice. Its platform-neutral design ensures memory isn't siloed, and its multimodal capabilities mean agents can remember insights from PDFs and images just as easily as text.

Best for Teams Needing Raw Vector Storage

If you have a massive engineering team and want to build your AI memory platform from the ground up, start with a robust vector database like Pinecone (for managed cloud) or Milvus (for open-source/on-prem).

AI Agent Memory Solutions vs. Vector Databases vs. RAG

A common point of confusion for AI infra architects is the difference between RAG, Vector DBs, and AI Memory.

Vector Databases (like Pinecone or Qdrant) are the storage layer. They hold embeddings. They don't know what a "user" or a "session" is.

RAG (Retrieval-Augmented Generation) is an action. It is the process of retrieving static documents to ground an LLM.

AI Agent Memory Platforms (like MemoryLake) represent state and lifecycle. They handle the active writing, updating, forgetting, and cross-session continuity of an agent's knowledge over time.

RAG fetches static facts. Memory evolves with the user. If you use a Vector DB, you have to write all the logic to turn it into an AI memory solution.

Conclusion: Choosing Your AI Memory Infrastructure

As the ecosystem matures, searches for the best AI memory tools and a multi-agent memory platform are skyrocketing. Engineering teams are realizing that tying memory to a single LLM provider (like OpenAI's Assistants API memory) creates vendor lock-in.

Because of this, the demand for a platform-agnostic memory layer for AI agents has surged. The industry is moving toward cross-session AI memory systems where the memory sits completely separate from the LLM routing logic. This decoupled architecture—where memory acts as independent infrastructure—provides unparalleled flexibility, allowing teams to swap underlying foundational models without wiping out their agents' accumulated knowledge.

Evaluate MemoryLake if your architecture demands portability across models, multi-agent memory sharing, and enterprise-grade governance. By implementing a robust persistent memory layer, you ensure your AI systems actually learn, adapt, and compound their value over time—turning basic agents into intelligent, deeply contextual collaborators.

Frequently Asked Questions

What is an AI agent memory solution?

It is a specialized infrastructure layer that allows AI agents to store, manage, and recall contextual information across different conversations, tasks, and timeframes, acting as a persistent "brain" for the AI.

What is the best memory solution for AI agents?

The best solution depends on the stack. For comprehensive, portable memory infrastructure across multiple agents, MemoryLake is a top contender. For fast API-based prototyping, Mem0 is highly recommended. For raw vector storage, Pinecone leads the market.

How do AI agents store long-term memory?

Agents store long-term memory by extracting key facts, entities, and summaries from context windows, converting them into vector embeddings or graph relationships, and saving them in a persistent database (like a vector DB or dedicated memory layer) to be retrieved in future sessions.

Is vector search enough for agent memory?

No. While vector search is great for finding similar text, true agent memory also requires entity resolution, conflict management (updating old facts), access control, and memory decay logic.

What is the difference between RAG and AI memory?

RAG typically retrieves static external knowledge (like company documents) to answer a question. AI memory involves reading and writing dynamic state—learning a user's preferences over time and updating that context autonomously.

Which AI memory platform is best for cross-session continuity?

Platforms explicitly designed as memory infrastructures, such as MemoryLake, excel at cross-session continuity by assigning unified "memory passports" to users and agents, ensuring context travels seamlessly between different interactions.