In early 2026, I find myself analyzing the AI landscape through a very different lens than just a year ago. The conversation is no longer centered on “Which base model is smarter?” Instead, the real question has become: Who can operationalize autonomy at scale?

This shift—what I call the Post-OpenClaw (Clawdbot) Era—marks a structural transition in AI development. After testing multiple data workflows and tracking milestone markets in real time using Powerdrill Bloom, one pattern has become increasingly clear: investor outcomes in 2026 will be driven less by model branding and more by agent execution, governance infrastructure, and distribution control.

Below, I outline four core predictions shaping AI development in 2026—supported by probability assessments, market dynamics, and operational signals.

1. Agentic Tool Stacks Will Outcompete Raw Model Branding

From “Best Model” to “Best Execution”

For years, the competitive moat in AI revolved around benchmark performance. In 2026, that moat shifts decisively toward agentic tool stacks—systems that can plan, retrieve, execute tools, browse, write code, monitor tasks, and complete multi-step objectives reliably.

The market is moving from:

“Which model has the highest benchmark score?”

to:

“Which system can complete real-world workflows with minimal failure?”

Always-on agents—similar to Clawdbot-style architectures—normalize background tasks, scheduled monitoring, and automated escalation. Buyers increasingly evaluate:

Uptime reliability

Task success rate

Tool integration depth

Policy compliance

Return on automation

Raw model deltas matter less when execution reliability becomes the bottleneck.
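A quick piece of arithmetic shows why reliability becomes the bottleneck. The sketch below assumes, purely for illustration, that each step of a workflow succeeds independently with the same probability. Real agent steps are rarely independent, and the function name and the 20-step figure are my own hypothetical choices, not measurements.

```python
def end_to_end_success(per_step_success: float, steps: int) -> float:
    """Probability that every step of a workflow succeeds.

    Assumes steps fail independently with an identical success rate,
    which is a simplification made only for this illustration.
    """
    if not 0.0 <= per_step_success <= 1.0:
        raise ValueError("per_step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_success ** steps


# Hypothetical 20-step workflow at two per-step reliability levels.
for p in (0.95, 0.99):
    print(f"per-step {p:.2f} -> end-to-end {end_to_end_success(p, 20):.3f}")
```

Under these assumptions, a 95% per-step success rate completes a 20-step workflow only about 36% of the time, while 99% per step completes it about 82% of the time. A small per-step gain compounds into a large difference in finished work.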

Probability Assessment: 70%

Agents are already the practical interface where value is realized, and distribution and workflow integration are harder to displace than incremental model improvements. That makes this shift more likely than not, though far from certain.

2. 2026 Becomes the Year of Workflow Governance

Agents as Semi-Autonomous Operators

As autonomy increases, enterprises begin treating agents not as chat assistants but as semi-autonomous operators interacting with core systems.

This creates a new mandatory layer: workflow governance.

Enterprises now require:

Tool permission controls

Allowlisted integrations

Data boundary enforcement

Immutable execution logs

Audit trails across providers

Spending naturally follows risk. Once agents touch sensitive infrastructure, compliance and auditability budgets accelerate.
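To make two of these requirements concrete, here is a minimal sketch of an allowlist check combined with a hash-chained execution log. Everything in it is hypothetical: the class name `GovernedToolRunner`, its methods, and the log layout are my own illustration, not any vendor's API. A hash chain makes tampering detectable rather than impossible, so treat it as a stand-in for a truly immutable log.

```python
import hashlib
import json


class GovernedToolRunner:
    """Hypothetical wrapper: allowlisted tool calls plus a tamper-evident log."""

    GENESIS = "0" * 64  # placeholder hash that precedes the first record

    def __init__(self, allowlist):
        self.allowlist = set(allowlist)
        self.log = []

    def _digest(self, prev_hash, entry):
        # Each record's hash covers the previous hash and its own content.
        payload = json.dumps(entry, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def _append(self, entry):
        prev_hash = self.log[-1]["hash"] if self.log else self.GENESIS
        self.log.append(
            {"entry": entry, "prev": prev_hash, "hash": self._digest(prev_hash, entry)}
        )

    def call(self, agent_id, tool_name, tool_fn, **kwargs):
        """Log the attempt, then run the tool only if it is allowlisted.

        Keyword arguments must be JSON-serializable so they can be logged.
        """
        allowed = tool_name in self.allowlist
        self._append(
            {"agent": agent_id, "tool": tool_name, "args": kwargs, "allowed": allowed}
        )
        if not allowed:
            raise PermissionError(f"tool '{tool_name}' is not allowlisted")
        return tool_fn(**kwargs)

    def verify(self):
        """Recompute the chain; any edited record breaks it."""
        prev_hash = self.GENESIS
        for record in self.log:
            if record["prev"] != prev_hash:
                return False
            if self._digest(prev_hash, record["entry"]) != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True
```

Denied attempts are logged before the exception is raised, so the audit trail captures what an agent tried to do as well as what it actually did.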

The Platform Wedge

Vendors that standardize:

Agent observability

Cross-model auditing

Policy enforcement

Explainability of actions

will capture disproportionate platform leverage.

Governance becomes a category, not a feature.

Probability Assessment: 75%

As autonomy expands, governance becomes non-optional. Risk exposure drives procurement decisions. Budget flows toward control layers once agents operate beyond sandbox environments.

3. Model Release Timing Becomes a Volatility Engine

Capability Matters — Timing Moves Markets

In 2026, model releases remain frequent. However, what moves capital in the short term is not raw capability but expectations about release timing.

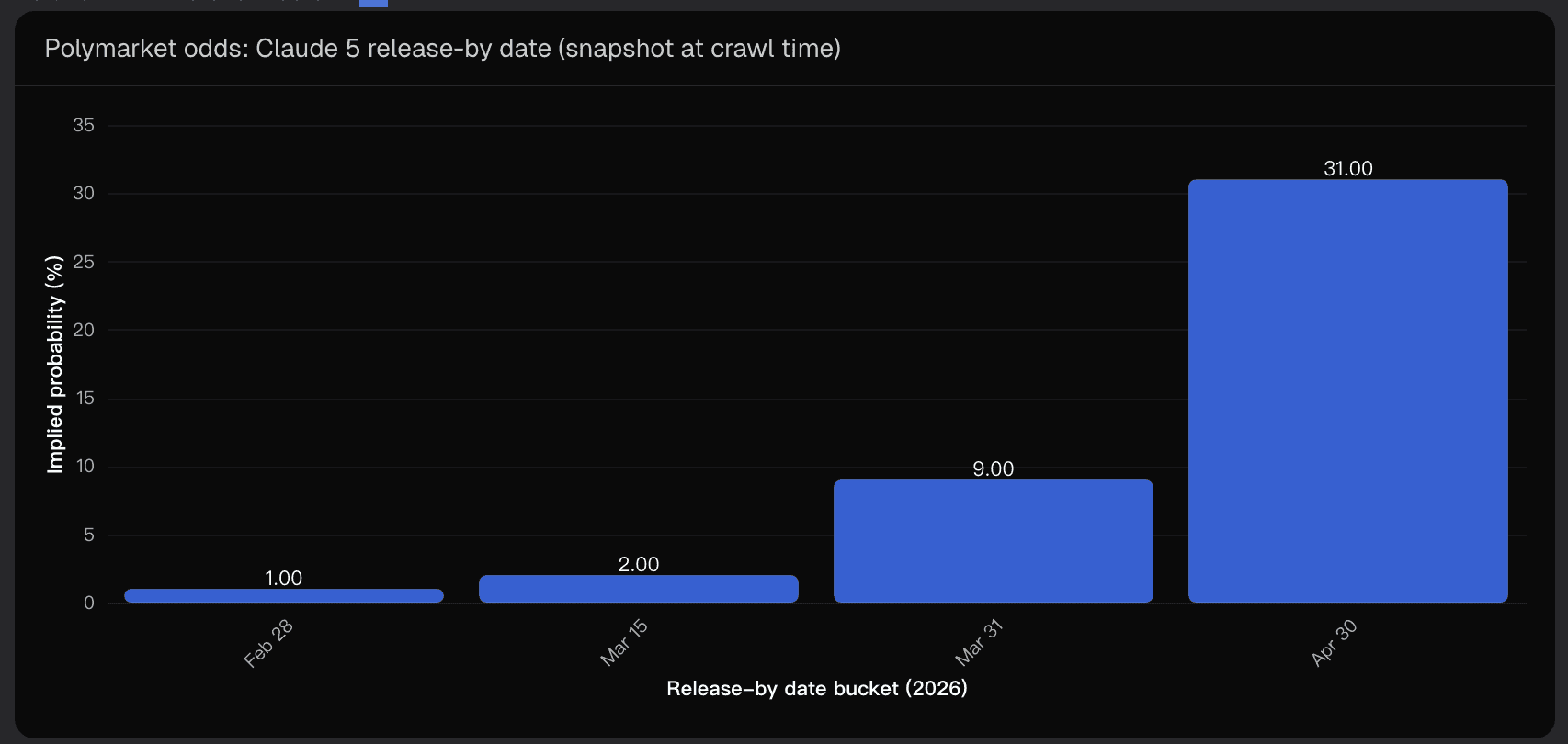

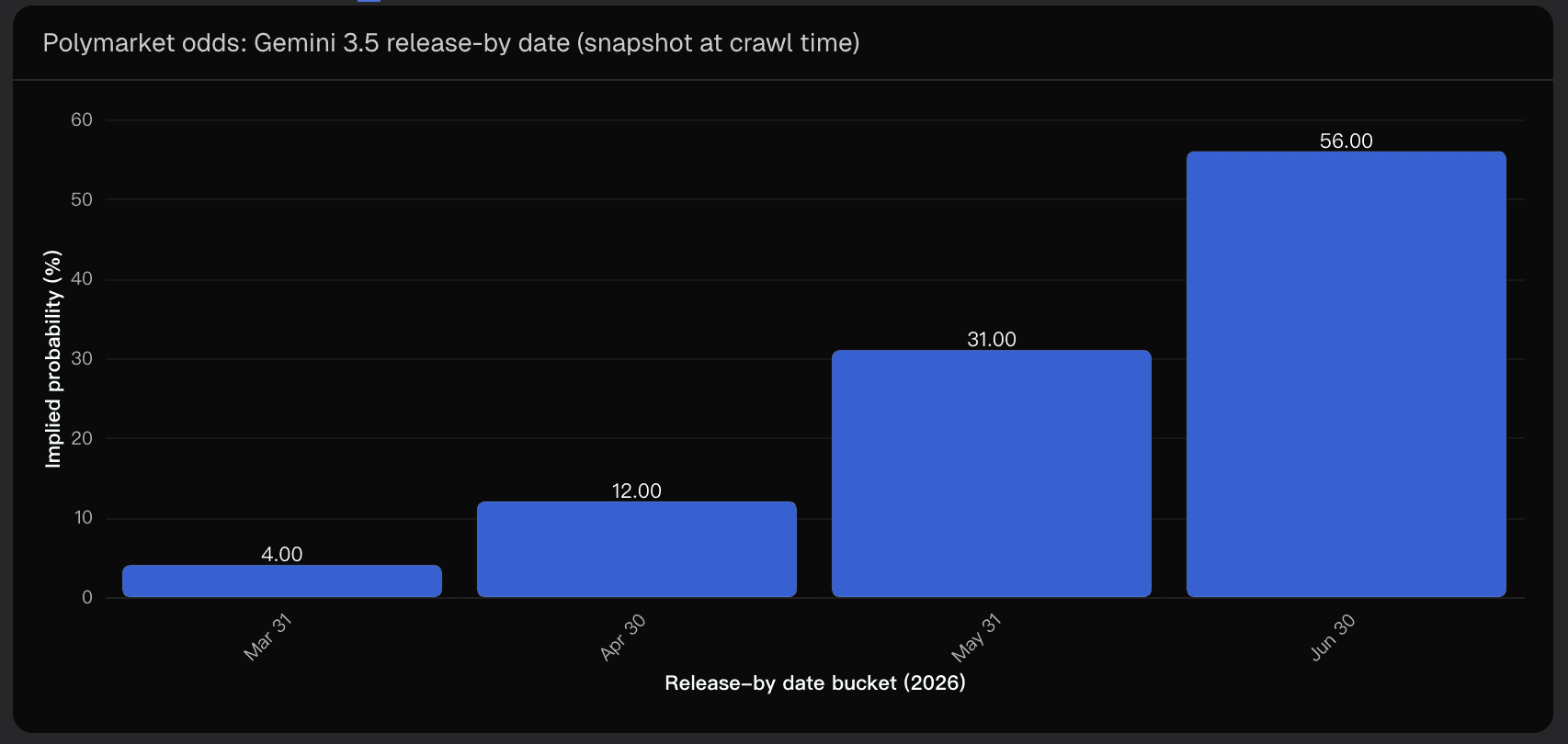

Milestone markets tracking releases like Claude 5 and Gemini 3.5 increasingly function as real-time expectation indices.

When probabilities compress sharply, capital rotates across:

Chip manufacturers

Inference infrastructure

Application layers

Private fundraising rounds

Market Signals from Milestone Pricing

Recent milestone market behavior shows:

Clustering of probability around mid-2026 release windows for Gemini 3.5

Early-to-mid 2026 concentration for Claude 5, but with meaningful uncertainty

Historical cases where frontier releases converged to near-certainty once decisive signals emerged

The pattern is clear:

Expectation repricing often precedes narrative shifts.

This creates tradable sentiment cycles across the AI stack.
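One way to put a number on "probability compression" is the Shannon entropy of the implied distribution across release windows: the more the market concentrates on one window, the lower the entropy. The sketch below is my own illustration of that idea, not a method used by any milestone market, and the two distributions are made-up inputs rather than observed prices.

```python
import math


def entropy_bits(probs):
    """Shannon entropy, in bits, of a distribution over release windows.

    Inputs are normalized first, since market-implied shares may not
    sum exactly to 1.
    """
    total = sum(probs)
    if total <= 0:
        raise ValueError("probabilities must sum to a positive value")
    normalized = [p / total for p in probs if p > 0]
    return -sum(p * math.log2(p) for p in normalized)


# Hypothetical shares across four release windows (illustrative only).
before = [0.25, 0.25, 0.25, 0.25]  # no consensus on timing
after = [0.85, 0.05, 0.05, 0.05]   # consensus has formed on one window

compression = entropy_bits(before) - entropy_bits(after)
print(f"entropy drop: {compression:.2f} bits")
```

A large drop in entropy between two snapshots is the kind of repricing event described above. How large a drop counts as "sharp" is a judgment call this sketch does not settle.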

Probability Assessment: 65%

Participation and liquidity in milestone markets continue to expand. As they mature, repricing events increasingly shape media attention and investor allocation behavior.

4. Capital Rotates Toward Cost-Efficient Agent Infrastructure

The Real Bottleneck: Operational Cost

As agents execute more steps and integrate more tools, cost drivers shift from token pricing to:

Tool-call overhead

Verification loops

Fallback routing

Human-in-the-loop exception handling

Latency-to-completion

The metric that matters most in 2026 is no longer “cost per token.”

It becomes: Cost per successful task.
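As a rough sketch of the metric, the total spend across all attempts, including failed ones, is divided by the number of tasks that actually succeeded. The `TaskRun` fields mirror the cost drivers listed above, but the structure and the dollar figures are hypothetical and chosen only to illustrate the calculation.

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    token_cost: float         # model inference spend, in dollars
    tool_cost: float          # tool-call overhead
    verification_cost: float  # verification loops and retries
    human_cost: float         # human-in-the-loop exception handling
    succeeded: bool

    @property
    def total_cost(self) -> float:
        return (
            self.token_cost
            + self.tool_cost
            + self.verification_cost
            + self.human_cost
        )


def cost_per_successful_task(runs):
    """Total spend on all runs divided by the count of successful runs."""
    successes = sum(1 for run in runs if run.succeeded)
    if successes == 0:
        return float("inf")  # money was spent and nothing was completed
    return sum(run.total_cost for run in runs) / successes


# Hypothetical comparison: cheap tokens with frequent failures versus
# pricier tokens with reliable completion.
cheap = [TaskRun(0.02, 0.03, 0.03, 0.02, succeeded=(i < 4)) for i in range(10)]
reliable = [TaskRun(0.10, 0.04, 0.04, 0.02, succeeded=True) for _ in range(10)]

print(f"cheap stack:    ${cost_per_successful_task(cheap):.2f} per successful task")
print(f"reliable stack: ${cost_per_successful_task(reliable):.2f} per successful task")
```

In this made-up comparison the cheap stack costs half as much per attempt, yet it is more expensive per successful task because six of its ten attempts fail.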

Infrastructure providers that reduce:

Failure rates

Retry loops

Latency

Governance friction

will command premium multiples.

Reliability > Cheap Tokens

Investors increasingly reward systems that demonstrate measurable improvements in:

End-to-end task success

Autonomous workflow uptime

Operational efficiency at scale

Probability Assessment: 60%

Cost and reliability bottlenecks are operational realities today. However, efficiency gains may be partially absorbed by expanding agent complexity, limiting net margin improvements.

Key Risks and Uncertainty Factors

No forecast is complete without a structured risk register. Several uncertainty variables could materially alter outcomes:

1. Definition Risk in Milestone Markets

Ambiguity around what qualifies as a “release” (beta vs GA, API-only vs consumer launch) can distort probability interpretation.

2. Information Asymmetry & Reflexivity

Odds movements can influence discourse, which in turn influences trading behavior—sometimes without new fundamentals.

3. Capability vs Productization Gap

A model may launch on schedule but fail to deliver real workflow impact due to pricing, safety constraints, or tool limitations.

4. Regulation and Safety Shocks

Major incidents could force autonomy throttling, slowing adoption of always-on agents.

5. Hardware & Inference Economics

If inference costs fall more slowly than expected, autonomy expansion may stall. If they fall faster than expected, governance demand accelerates, but margins compress.

Strategic Takeaways for 2026

From my analysis, three decision-oriented conclusions stand out:

Favor companies that own workflow distribution and demonstrate measurable task success metrics—not just model access.

Treat milestone repricing as a sentiment radar; probability compression often precedes broader capital rotation.

Underwrite winners based on their ability to operationalize autonomy—governance by default, tool safety, and cost-per-successful-task optimization.

Conclusion: The Real AI Race in 2026

The Post-OpenClaw era redefines competitive advantage in AI.

The next phase is not about who builds the smartest base model. It is about who can deploy reliable, governed, cost-efficient autonomy at scale.

Model releases will continue to shape headlines. But execution quality, governance infrastructure, and distribution ownership will determine long-term winners.

Using structured milestone tracking and workflow-level analytics—especially through tools like Powerdrill Bloom—makes it increasingly clear that 2026 will reward operational discipline over benchmark hype.

The AI race is no longer theoretical. It is infrastructural.

Disclaimer: This article reflects forward-looking analysis and probability-based forecasting and does not constitute investment advice.