At the start of 2026, I found myself returning to a question that quietly sits at the center of almost every conversation in the AI ecosystem: who will actually be perceived as having the strongest frontier AI model when February comes to a close?

To ground this forecast, I structured my analysis using Powerdrill Bloom, which allowed me to systematically compare release cadence, capability signals, and real-world deployment patterns across leading AI labs.

What follows is not a hype-driven ranking, but a probability-weighted view of how this race is most likely to resolve by the end of February 2026.

1. The Core Prediction

Before naming a winner, I need to be explicit about what I mean by “the strongest AI model.” For this forecast, my operational definition is intentionally practical rather than academic.

By strongest, I mean the frontier system that performs best overall across four dimensions by the end of February 2026:

General reasoning and breadth of knowledge

Agentic coding and tool-using autonomy

Robustness, instruction-following, and stability

Demonstrated maturity in real-world deployment (not just demos)

Under this definition, my base-case call is clear:

OpenAI is the most likely lab to be perceived as holding the strongest frontier AI model at the end of February 2026, with Google DeepMind remaining a very close second.

The emphasis on perception at a specific date is important: frontier leadership is often decided not by who was strongest last quarter, but by who ships and stabilizes the most capable system closest to the evaluation window.

2. A Probability-Based View of the Frontier Model Race

2.1 End-State Probability Distribution

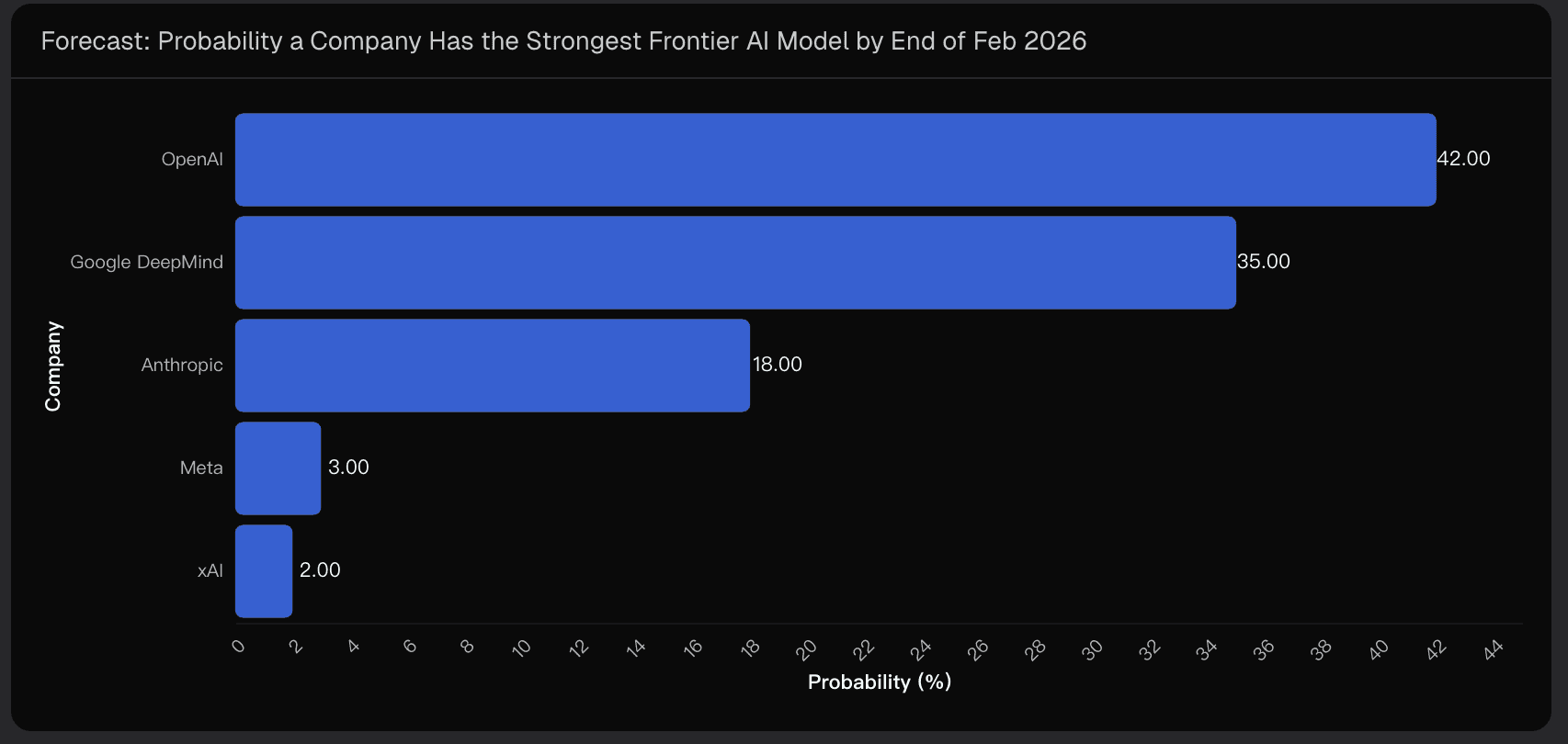

Rather than framing this as a binary winner-takes-all outcome, I prefer a market-style probability view. Based on current signals and execution patterns, my point estimates look like this:

OpenAI: 42%

Google DeepMind: 35%

Anthropic: 18%

Meta: 3%

xAI: 2%

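These probabilities are intentionally tight at the top: OpenAI's edge over Google DeepMind is only seven points, and the two labs together account for 77% of the probability mass. In frontier AI, the distance between #1 and #2 is often a single release or a single evaluation suite.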
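As a quick sanity check on the point estimates above, the following minimal Python sketch confirms that they sum to 100% and converts each one into implied odds against. The names `estimates` and `odds_against` are illustrative only and are not taken from any tool used for this forecast.

```python
from fractions import Fraction

# Point estimates from the list above, in percent.
estimates = {
    "OpenAI": 42,
    "Google DeepMind": 35,
    "Anthropic": 18,
    "Meta": 3,
    "xAI": 2,
}


def odds_against(pct: int) -> Fraction:
    """Implied odds against an outcome whose probability is pct/100."""
    return Fraction(100 - pct, pct)


total = sum(estimates.values())
ranked = sorted(estimates.items(), key=lambda kv: kv[1], reverse=True)
lead_margin = ranked[0][1] - ranked[1][1]      # gap between #1 and #2, in points
top_two_share = ranked[0][1] + ranked[1][1]    # combined mass of the top two labs

print(f"total = {total}%")
print(f"lead margin = {lead_margin} points, top two = {top_two_share}%")
for lab, pct in ranked:
    print(f"{lab}: {pct}% (odds against {float(odds_against(pct)):.2f} to 1)")
```

Read this way, even the favorite is more likely than not to lose: the odds against OpenAI are 58 to 42.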

2.2 How to Read These Probabilities

These numbers do not answer the question “who is best today.” They are end-February probabilities, conditioned on:

Historical base rates of frontier leadership

Recency weighting from late 2025 through early 2026

Execution and delivery risk

Definition risk around what evaluators treat as “strongest”

In other words, this is a forecast of how the narrative and benchmarks are most likely to land, not a static measurement of raw capability.

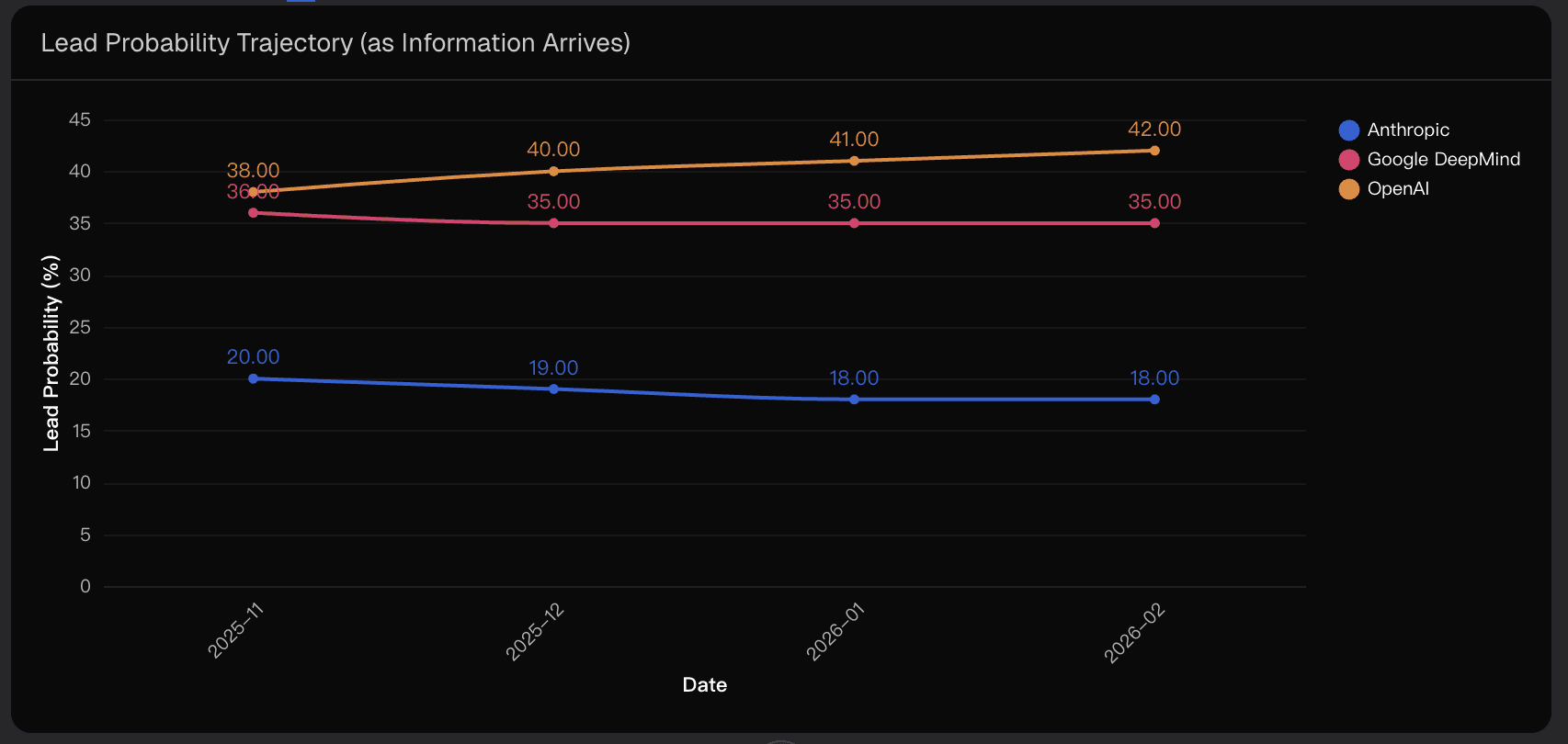

2.3 Why the Forecast Is Stable but Not Locked

As new releases and third-party comparisons arrive, the probabilities should shift incrementally rather than abruptly. They are not locked, though: both OpenAI and Google operate continuous release pipelines and can ship meaningful upgrades late in the cycle.

That dynamic keeps the race close and means no lead is ever fully safe.
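One way to picture "stable but not locked" is a simple Bayesian update of the point estimates from section 2.1. The sketch below is an illustration under stated assumptions, not the procedure used to produce the forecast: the function `bayes_update` and both likelihood ratios are hypothetical values chosen to show the mechanics.

```python
def bayes_update(prior: dict[str, float], likelihood: dict[str, float]) -> dict[str, float]:
    """Scale each prior by the relative likelihood of the new evidence, then renormalize."""
    unnormalized = {lab: p * likelihood.get(lab, 1.0) for lab, p in prior.items()}
    z = sum(unnormalized.values())
    return {lab: v / z for lab, v in unnormalized.items()}


# Point estimates from section 2.1, expressed as probabilities.
prior = {
    "OpenAI": 0.42,
    "Google DeepMind": 0.35,
    "Anthropic": 0.18,
    "Meta": 0.03,
    "xAI": 0.02,
}

# HYPOTHETICAL evidence: a third-party comparison judged 1.1x as likely to
# appear in worlds where Google DeepMind finishes the month on top.
mild = bayes_update(prior, {"Google DeepMind": 1.1})

# HYPOTHETICAL stronger evidence with a 1.3x likelihood ratio.
strong = bayes_update(prior, {"Google DeepMind": 1.3})

for label, posterior in (("mild", mild), ("strong", strong)):
    leader = max(posterior, key=posterior.get)
    print(label, {lab: round(p, 3) for lab, p in posterior.items()}, "leader:", leader)
```

Under these assumptions, mild evidence narrows the gap without changing the leader, while any likelihood ratio above 1.2 in Google DeepMind's favor (0.42 / 0.35) is enough to flip the top spot.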

3. Evidence and Drivers Behind the Forecast

3.1 OpenAI’s Execution Advantage Into February 2026

The single strongest signal supporting OpenAI’s lead is execution timing.

Public release patterns point to continued iteration on the GPT-5 line—including advanced “thinking” configurations—alongside new Codex-family releases in early February 2026. That matters because frontier leadership is frequently awarded to the lab that ships and stabilizes the most capable snapshot closest to the cutoff date.

Perceived capability leadership is often a function of when a lab ships, not just how good its models were earlier.

3.2 Agentic Coding as a Perception Multiplier

Another major driver is OpenAI’s acceleration in agentic coding and tool-using autonomy. Coding agents have become a core axis for what practitioners now mean by “strongest model,” because they translate abstract reasoning into visible productivity.

Even if Google leads on specific academic or multimodal benchmarks, OpenAI can still “win the month” if it clearly outperforms on end-to-end task execution with fewer interventions and fewer critical failures.

3.3 Google DeepMind’s Best Counter-Case

Google DeepMind remains the most credible alternative winner.

With the Gemini 3 generation, Google has positioned itself around scale, long context, multimodal reasoning, and deeply integrated distribution across Search and developer platforms. This creates a powerful reframing risk: evaluators may increasingly define “strongest” as the most capable assistant people can actually use everywhere.

That reframing is exactly why Google holds a meaningful 35% probability rather than trailing far behind.

3.4 Anthropic’s Path to a Narrative Flip

Anthropic sits in a genuinely interesting position.

If a new flagship release clearly dominates hard reasoning tasks, reliability, and real-world stability—and if that dominance shows up consistently on widely cited third-party evaluations—the narrative can flip fast. Anthropic’s challenge is not technical talent; it’s that frontier leadership narratives often overweight breadth, cadence, and distribution.

That said, a single step-change release is enough to reset the leaderboard.

3.5 Why Meta and xAI Remain Low Probability for This Window

This is not a statement about long-term potential.

The constraint is timing. An end-February call favors teams already shipping frequent frontier updates and capturing evaluator attention in a tight window. Public validation and benchmark acceptance matter just as much as raw capability—and those mechanisms take time to compound.

4. Key Uncertainties That Could Change the Outcome

4.1 Definition Risk Is the Biggest Swing Factor

The winner depends heavily on how “strongest” is implicitly defined:

Multimodal depth and long context favor Google

Reasoning and agentic coding favor OpenAI

Reliability and low-hallucination performance favor Anthropic

The crowd’s scoring function ultimately decides the outcome.
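The idea of a crowd "scoring function" can be made concrete as a weighted sum over the three dimensions listed above. The sketch below is purely illustrative: every score and weight is a hypothetical placeholder chosen to mirror the qualitative claims in this section, not a measured benchmark result.

```python
# HYPOTHETICAL capability scores on a 0-1 scale. They only encode the
# qualitative ordering claimed above; they are not measured results.
scores = {
    "OpenAI": {"multimodal_long_context": 0.80, "reasoning_agentic_coding": 0.90, "reliability": 0.80},
    "Google DeepMind": {"multimodal_long_context": 0.92, "reasoning_agentic_coding": 0.82, "reliability": 0.80},
    "Anthropic": {"multimodal_long_context": 0.72, "reasoning_agentic_coding": 0.85, "reliability": 0.92},
}

# HYPOTHETICAL weightings an evaluator crowd might implicitly apply.
profiles = {
    "agentic-first": {"multimodal_long_context": 0.2, "reasoning_agentic_coding": 0.6, "reliability": 0.2},
    "multimodal-first": {"multimodal_long_context": 0.6, "reasoning_agentic_coding": 0.2, "reliability": 0.2},
    "reliability-first": {"multimodal_long_context": 0.2, "reasoning_agentic_coding": 0.2, "reliability": 0.6},
}


def winner(weights: dict[str, float]) -> str:
    """Return the lab with the highest weighted score under the given weights."""
    totals = {
        lab: sum(weights[dim] * lab_scores[dim] for dim in weights)
        for lab, lab_scores in scores.items()
    }
    return max(totals, key=totals.get)


for name, weights in profiles.items():
    print(f"{name}: {winner(weights)}")
```

With the capability scores held fixed, changing only the weights is enough to change the winner, which is the sense in which definition risk is the biggest swing factor.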

4.2 Benchmark Volatility Near the Finish Line

Third-party leaderboards are noisy. Prompting differences, system access, versioning, and sampling variance can all distort rankings. A single late-February leaderboard update can flip sentiment even when underlying differences are modest.
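Sampling variance alone can produce such flips. The following Monte Carlo sketch, using hypothetical accuracies of 72% and 70% for two models, estimates how often the weaker model ties or beats the stronger one on a benchmark of a given size. The function name `flip_rate` and all parameter values are assumptions made for illustration.

```python
import random


def flip_rate(p_a: float, p_b: float, n_items: int, trials: int, seed: int = 0) -> float:
    """Fraction of simulated leaderboards where model B scores at least as
    high as model A, even though A's true accuracy p_a exceeds p_b."""
    rng = random.Random(seed)
    flips = 0
    for _ in range(trials):
        # Each benchmark item is an independent pass/fail draw per model.
        score_a = sum(rng.random() < p_a for _ in range(n_items))
        score_b = sum(rng.random() < p_b for _ in range(n_items))
        if score_b >= score_a:
            flips += 1
    return flips / trials


# HYPOTHETICAL true accuracies: A is better than B by two points.
small = flip_rate(0.72, 0.70, n_items=500, trials=400)
large = flip_rate(0.72, 0.70, n_items=5000, trials=400)

print(f"500-item benchmark:  weaker model ties or leads in {small:.1%} of runs")
print(f"5000-item benchmark: weaker model ties or leads in {large:.1%} of runs")
```

Under these assumptions, a normal approximation suggests the weaker model ties or leads in roughly one run in four on the 500-item benchmark, and far less often on the 5000-item one. Small leaderboards can therefore rank models in the wrong order fairly often when the true gap is modest.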

4.3 Release Timing Risk

The most impactful event remains a late-month release that decisively outperforms on the metrics people care about. Both OpenAI and Google retain the ability to ship an “end-of-month closer,” which keeps this race tight.

5. Conclusion: The Most Likely Narrative by Late February 2026

My base case is that OpenAI leads the end-February 2026 “strongest AI model” narrative, driven by rapid frontier iteration and momentum in agentic coding. Google DeepMind remains a close second, with a real chance to win if multimodal capability and integrated deployment dominate evaluator priorities. Anthropic represents the high-upside spoiler if reliability and hard problem-solving become the defining benchmark.

This forecast—and the probabilities behind it—was structured and stress-tested using Powerdrill Bloom to ensure the conclusion reflects signals, not sentiment.

Disclaimer: This article represents analytical opinion and forward-looking interpretation, not factual claims or investment advice.